Implement AI Phone Bot: Start Small, Win Fast

Most companies shouldn't implement an AI phone bot across the whole contact center. Not first. That's how you burn budget, annoy customers, and end up calling the pilot a "learning experience" instead of admitting the rollout was sloppy.

Everyone says start with a bold transformation plan. I think that's backwards. According to a 2024 finding cited by Makebot.ai, 70% of AI rollouts fail because teams don't manage change or reskill people properly. The problem usually isn't the voice AI model. It's scope, handoff design, QA testing, and whether your CRM integration and fallback flows work under pressure.

This article lays out a smaller, faster path. Six sections. One practical way to prove value before you scale conversational IVR automation into something your agents, and your customers, can actually trust.

What it means to implement AI phone bot systems

Everybody says the same thing about AI phone bots: they cut costs, answer faster, and take pressure off agents. Sure. That's part of it. Infinite Labs Digital makes that case pretty clearly, framing them as a way to improve customer satisfaction while removing repetitive work from human teams. Fine. But I think that's where a lot of rollout plans start going sideways, because saving money is not the first thing customers notice. Broken trust is.

Picture the wrong call landing in automation. A customer whose order was canceled sees a charge anyway, calls in already irritated, gets a polished synthetic voice, and now the whole thing feels worse. Same with a renewal call from someone who's been ignored twice already. Compare that with a Memorial Day store-hours question at 8:12 on a Monday morning. That's the split. Some calls are easy wins. Some are where bad implementations go to die.

The tech stack itself isn't mysterious. The bot hears speech through ASR, interprets intent with NLU, and replies using TTS. That's the engine room. What matters in practice is simpler: can it handle the routine thing quickly, and can it hand off cleanly the second the call stops being routine?
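To make that concrete, here's a minimal sketch of a single call turn: ASR in, NLU in the middle, a reply headed for TTS, and a handoff the moment the call stops being routine. Everything named here is an assumption for illustration. `transcribe` and `classify` are stand-ins for whatever ASR and NLU services you use, and the canned answer and the 0.7 threshold are made up.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical canned answers for routine intents; not from any real system.
ROUTINE_ANSWERS = {
    "store_hours": "We're open 9 to 5, Monday through Saturday.",
}


@dataclass
class Turn:
    reply_text: str  # text that would be sent on to TTS
    hand_off: bool   # True when the call should go to a human


def handle_turn(
    audio: bytes,
    transcribe: Callable[[bytes], str],            # ASR stand-in
    classify: Callable[[str], Tuple[str, float]],  # NLU stand-in: (intent, confidence)
    min_confidence: float = 0.7,                   # assumed threshold, tune on real calls
) -> Turn:
    text = transcribe(audio)
    intent, confidence = classify(text)
    if intent in ROUTINE_ANSWERS and confidence >= min_confidence:
        return Turn(ROUTINE_ANSWERS[intent], hand_off=False)
    # Anything non-routine or uncertain goes straight to a person.
    return Turn("Let me get you to someone who can help.", hand_off=True)
```

The point of the shape is that the handoff isn't an afterthought. It's the default branch, and the bot has to earn the other one.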

Nextiva reported that customer support made up 42.4% of the chatbot market in 2024. That number tells you where companies are putting money. It doesn't tell you whether they're choosing sane starting points. Plenty of teams still treat phone automation like a bolt-on gadget, basically a talking FAQ with a smoother voice, then act surprised when callers hate it.

The missing piece is usually use-case selection. Not volume. Not ambition. Learning conditions. Your first deployment should live in a lane with clear intents, few strange edge cases, and an obvious escape hatch to a human being. That's why conversational IVR automation tends to work best in narrow front-door jobs like appointment confirmation, store hours, order status, or basic call routing.

I've seen this mistake more than once: someone looks at the busiest queue and decides that's the pilot because the upside looks huge on a slide deck. Say it's 1,500 calls a week. Sounds irresistible. But if those calls involve billing confusion, cancellations, emotional complaints, and ten variations nobody labeled properly in transcripts, you've built yourself a very expensive lesson.

A smaller queue can teach you more. Five predictable intents beat one chaotic high-volume line every time if you're trying to get an early system working without torching goodwill. If your transcripts are thin, your labels are messy, or callers are angry before the bot even says hello, you're not launching a pilot. You're staging an avoidable failure.

That's what implementation really means here: choosing the first lane based on feedback quality, training-data quality, and trust risk instead of raw call count. Start small enough to learn fast and visible enough to matter — one flow, one team, one handoff path that works every single time. We broke down that approach in Implement Ai Phone Bot Human Centered. So why do so many companies still dump an AI call center chatbot into their messiest queue and hope for magic?

Why most AI phone bot rollouts fail early

Why do so many AI phone bot launches look smart in the planning deck and lousy by the second week?

I’ve watched teams answer that question with a spreadsheet. Biggest queue. Biggest call volume. Fastest savings story. It sounds sharp in a conference room with a neat ROI model on slide 12 and a target date circled for Q3.

Then Tuesday happens. 8:07 a.m. Two hundred callers waiting. The bot gets “Kathryn” as “Catherine,” misses the reason for the call, sends billing people to support, support people to sales, and suddenly one shaky flow turns into a live-fire customer service mess.

The answer is simpler than people want it to be. They start in the wrong place.

I think that’s backwards. If the voice system is still shaky, dropping it into the busiest line isn’t ambitious. It’s reckless.

High volume gets treated like proof of rollout fit. It isn’t. It just means the blast radius is bigger.

That part gets lost because people talk about an AI phone bot like it’s one thing — one clever voice on the line, maybe with a pleasant tone and a decent script. That’s not what’s actually running the call. Nextiva lays it out more honestly: speech recognition, natural language understanding, text-to-speech, routing logic, prompts, fallback rules, agent transfer. Every piece has to hold up at once. One weak link is enough to wreck the experience.

Customers don’t care whether the ASR missed a word or the transfer logic broke after intent detection got confused. They just know your company got harder to deal with overnight.

That impression sticks. Fast.

A sloppy conversational IVR launch teaches customers bad habits almost immediately. They mash zero before the greeting finishes. They repeat themselves early because last time the bot burned 90 seconds and still dumped them onto an agent. They come into the next call annoyed before anyone has even said hello.

Your agents get hit too. Angry transfers. Weak summaries. Longer handle times. Less patience on both ends of the line.

The ugly part is leadership can miss it at first because the dashboard behaves nicely for a minute. Week one might show healthy deflection. Week two might show containment inching up. Those numbers can hide repeat calls, escalations after failed self-service, and brand damage that never fits cleanly into a KPI tile.

I’d argue sequencing does more damage than modeling early on. A mediocre model in a safe lane can teach you something useful. An immature system in your most visible customer queue teaches customers not to trust you.

You can see the better rollout pattern in places where voice automation matures faster. Nextiva points to HR and recruiting use cases growing at a 25.3% CAGR. That’s not because those teams found magic software in some secret back room.

The calls are narrower. Cleaner. More predictable.

Fewer weird edge cases show up when someone is asking about interview scheduling or application status than when they’re calling angry about a double charge, a canceled shipment, or a medical claim that vanished somewhere between systems.

That should change how you launch.

Start where language patterns are constrained. Start where failure won’t torch trust. Start where you can hear exactly what breaks without making your busiest customers absorb every mistake in public.

Your AI voice bot call center deployment strategy should protect trust first and scale second. A smart pilot plan for an AI phone bot doesn’t begin with the biggest queue on the slide deck. It begins with the safest place to learn.

The funny part? The small launch usually teaches you more than the big one ever could. Less applause upfront. Fewer scars later.

AI phone bot implementation sequencing strategy

Week three. Tuesday. 8:17 a.m. A team I worked with had pushed its shiny new voice bot into a high-volume “simple” queue first, because the spreadsheet said that was the quickest win. By lunch, callers were speaking from cars, warehouses, windy loading docks, and one guy who sounded like he was ordering from inside an HVAC closet. The transcripts were junk. The handoffs were messy. Confidence in the whole project dropped faster than the call handle time ever did.

I’ve seen that movie before, and I think too many teams still talk themselves into it.

There’s a reason. A 2024 finding cited by Makebot.ai says 70% of AI rollouts fail because companies don’t manage change well and don’t reskill people properly. That number tracks with what I’ve watched happen in real deployments. People treat voice automation like it’s just a routing tweak. It isn’t. It’s a live system trying to understand real speech from real humans who interrupt themselves, mumble street names, switch topics halfway through a sentence, and call while sitting in a parking lot on Bluetooth.

That’s why raw volume is a lousy way to choose your first queue.

Aircall’s basic definition is fine: AI voice bots automate phone conversations with speech recognition, natural language processing, and text-to-speech. Sure. But those pieces don’t improve evenly. TTS usually isn’t where things break. The pain is almost always in ASR and NLU—hearing messy audio correctly, then figuring out what the caller actually meant and what should happen next.

People love saying “start with the easiest calls” or “start with the biggest queue so you can prove savings fast.” I don’t buy it. That sounds neat in a boardroom and reckless in production.

The better opening move is usually lower volume, moderate complexity, and higher patience tolerance.

Not glamorous. Still smarter.

If someone is calling for claims intake, first-level triage, guided qualification, or after-hours issue capture, they already expect questions, pauses, clarification, maybe even a little repetition while details get captured. That gives the bot room to recover when it’s unsure. A balance inquiry line or store-hours request is different. People want speed there. Add seven seconds of clarification and they won’t think “how careful.” They’ll think “why is this thing so bad?”

That’s really the whole sequencing principle: don’t ask whether the use case is simple. Ask whether the use case lets the bot recover safely when it gets confused.

The sequencing model that tends to work

- Phase 1: lower-volume, higher-complexity calls with expected dwell time. Start with intake flows, guided qualification, first-level triage, or after-hours issue capture. These calls generate richer language data without exposing thousands of customers while the system is still learning.

- Phase 2: medium-volume scenarios with clearer intents. Once ASR gets more accurate and NLU confidence stops wobbling all over the place, move into repeatable interactions like rescheduling, order updates, or policy questions with bounded answers.

- Phase 3: simpler and higher-volume scenarios. After fallback behavior is reliable and handoffs are under control, expand into broader conversational IVR automation across common service queues.

The selection criteria you should use

Tolerance for latency. This matters more than teams admit. In roadside assistance intake, a seven-second clarification prompt can be acceptable because callers know details matter. In a store-hours lookup flow, those same seven seconds feel absurd.

Exception frequency. You need enough variation to teach the system something useful. You do not want so much chaos that every third call detonates the flow logic. There’s a middle ground here. Too little variation teaches nothing. Too much teaches panic.

Business risk. Keep your first wave away from payment disputes, cancellations with legal exposure, fraud alerts, and regulated disclosures unless your controls are already mature. Early pilots should fail safely if they fail at all.

Change readiness inside the team. This is where that 70% figure bites people. Agents need takeover scripts. QA needs scorecards for bot-assisted conversations. Product owners need weekly reviews of ASR misses and NLU confusion sets. If nobody has built that habit yet, scaling faster won’t fix it.

I’d also add one practical gut check I wish more teams used: if your supervisors can’t sit down every Friday with twenty ugly calls and explain what went wrong in plain English, you’re not ready for broad rollout no matter how good the pilot dashboard looks.
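If it helps to make the selection criteria in this section less hand-wavy, here's a rough screening sketch. It's a checklist in code, not a scoring model. The field names, the five-second latency floor, and the 5% to 30% exception band are all assumptions I picked for illustration; set your own from real call data.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class QueueCandidate:
    name: str
    latency_tolerance_sec: float  # how long a clarification can run before callers balk
    exception_rate: float         # share of calls that leave the expected flow (0 to 1)
    high_business_risk: bool      # disputes, fraud alerts, regulated disclosures
    team_has_review_habit: bool   # takeover scripts, QA scorecards, weekly ASR/NLU review


def screening_concerns(
    q: QueueCandidate,
    min_latency_sec: float = 5.0,                       # assumed floor
    exception_band: Tuple[float, float] = (0.05, 0.30),  # assumed "middle ground"
) -> List[str]:
    """Return the reasons this queue is a poor first pilot; empty means no red flags."""
    concerns = []
    if q.latency_tolerance_sec < min_latency_sec:
        concerns.append("callers expect speed; little room to recover")
    low, high = exception_band
    if q.exception_rate < low:
        concerns.append("too little variation to learn from")
    elif q.exception_rate > high:
        concerns.append("too many exceptions for an early flow")
    if q.high_business_risk:
        concerns.append("business risk too high for a first wave")
    if not q.team_has_review_habit:
        concerns.append("team review habit not in place")
    return concerns
```

Run a roadside-intake queue and a store-hours queue through it and the difference shows up immediately: one comes back clean, the other gets flagged for speed expectations and thin variation.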

If you want a practical blueprint for this progression, this AI voice bot call center deployment strategy lines up well with it.

So yes—start small, but not in the way most people mean by small. Start where learning is rich and fallout stays contained. Earn scale after the bot proves it can survive ambiguity without burning trust. Before you pick that first queue, are you choosing the one that makes a slide deck look clean—or the one that gives your bot room to get smarter without hurting you?

How to accelerate refinement without increasing risk

Tuesday, 10:14 a.m. A customer calls to move a service appointment from Thursday to Friday. The bot catches the intent, then mangles the date because the ASR hears it wrong over road noise and a dog losing its mind in the background. Ugly moment. But nothing breaks, because the flow forces a confirmation before any booking changes go through.

That's the whole game, honestly. Not perfection. Containment.
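Here's roughly what that guardrail looks like in code, as a sketch under assumptions. The booking is a plain dictionary and the function names are hypothetical. The only thing that matters is the rule: no write happens unless the caller confirmed what the bot heard.

```python
from datetime import date


def confirmation_prompt(heard: date) -> str:
    """Read the date back so a mis-heard day gets caught before anything changes."""
    return f"Just to confirm, you want {heard.strftime('%A, %B %d')}. Is that right?"


def reschedule(booking: dict, heard: date, caller_said_yes: bool) -> dict:
    """Return an updated copy of the booking only if the caller confirmed."""
    if not caller_said_yes:
        return booking  # unchanged: the ASR miss stays harmless
    return {**booking, "date": heard}
```

If the ASR hears Saturday instead of Friday, the caller says no, the booking stays put, and you've got a clean failure to review instead of a wrong appointment to apologize for.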

I think most teams obsess over launch checklists because they feel clean: routing works, integrations connect, coverage hours look respectable. Great. Meanwhile the real problem sits there untouched — they're learning too slowly after real callers start talking like real callers.

I've seen pilots get congratulated for exactly that stuff and still trip in production. Ringover calls a phone call bot a voice assistant that simulates human conversation over calls. Sure. Then treat it like conversation, not just software. People call while driving, while annoyed, while hunting for an invoice, while talking to their kid, while standing in a kitchen with a smoke alarm chirping every 42 seconds. That's what you're training on.

The team that handled that reschedule mistake well didn't wait for next sprint planning or some polished Friday review deck. They pulled the transcript that day, tagged the failure, checked the escalation notes, rewrote the prompt, tightened slot validation, and pushed an update the same day. Same day matters more than people want to admit.

Build the first phase like a lab. Guardrails first. Speed second.

What to log on every call

Not just the easy metrics somebody can throw into a dashboard screenshot.

Log the transcript. Log the selected intent. Log the confidence score. Log fallback triggers, transfer reason, final outcome, and whether the TTS response matched policy language word for word.

Say your NLU marks a call as “billing question” at 0.54 confidence, then the live agent ends up processing an address change. That's not noise. That's training data with its hand up.

Track interruptions too. Repeats. Hang-ups. Full bailouts where callers quit halfway through. I'd argue those moments are more useful than top-line containment rates, because “contained” can still mean slow, frustrating, and one bad prompt away from failure.
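A per-call record along those lines might look like the sketch below. The field names are my own, not from any particular platform, and the 0.6 cutoff is an assumption. It also assumes agents record the final outcome using the same labels the NLU uses for intents, which is worth setting up on purpose.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CallLog:
    call_id: str
    transcript: str
    selected_intent: str
    confidence: float
    fallback_triggers: List[str] = field(default_factory=list)
    transfer_reason: Optional[str] = None
    final_outcome: Optional[str] = None  # what was actually done, in intent labels
    tts_matched_policy: bool = True
    interruptions: int = 0
    repeats: int = 0
    hung_up_midway: bool = False


def is_training_candidate(log: CallLog, low_confidence: float = 0.6) -> bool:
    """Flag calls where the bot was unsure or the outcome contradicts its chosen intent."""
    mismatch = log.final_outcome is not None and log.final_outcome != log.selected_intent
    return mismatch or log.confidence < low_confidence
```

The billing-question-that-was-really-an-address-change call gets flagged twice over here: low confidence and a mismatched outcome.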

How to review without drowning in transcripts

Here's where teams usually get weirdly democratic and pretend every call deserves equal attention. It doesn't.

- 100% review of escalated calls during the first phase

- 100% review of low-confidence intents and fallback-and-recovery paths

- Targeted review of successful but unusually long calls, because “successful” can still mean painfully slow

- Daily clustering of repeated ASR misses by accent, phrase, or background noise condition

Bury this in your operating model if you want the pilot to survive: you're not asking whether conversational IVR automation “worked” in some vague sense. You're asking where it broke, how often it broke, and whether that break stayed harmless or actually landed on a customer.
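The review rules above are simple enough to automate as a first-pass filter. This is a sketch, assuming each call is a dictionary with the keys shown. The 0.6 confidence cutoff and the four-minute "unusually long" mark are placeholders, not recommendations.

```python
from collections import Counter
from typing import List, Optional, Tuple


def review_reason(call: dict, low_confidence: float = 0.6,
                  long_call_sec: int = 240) -> Optional[str]:
    """Return why a call belongs in the review pile, or None if it can be skipped."""
    if call["escalated"]:
        return "escalated"
    if call["confidence"] < low_confidence or call["fallback_triggered"]:
        return "low_confidence_or_fallback"
    if call["duration_sec"] > long_call_sec:
        return "successful_but_long"
    return None


def cluster_asr_misses(misses: List[dict]) -> List[Tuple[Tuple[str, str], int]]:
    """Count ASR misses by (condition, phrase) so repeat offenders surface first."""
    return Counter((m["condition"], m["phrase"]) for m in misses).most_common()
```

Counting by exact phrase is crude. It's still enough to show you that "Kathryn" keeps failing on road noise before anyone builds a fancier clustering job.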

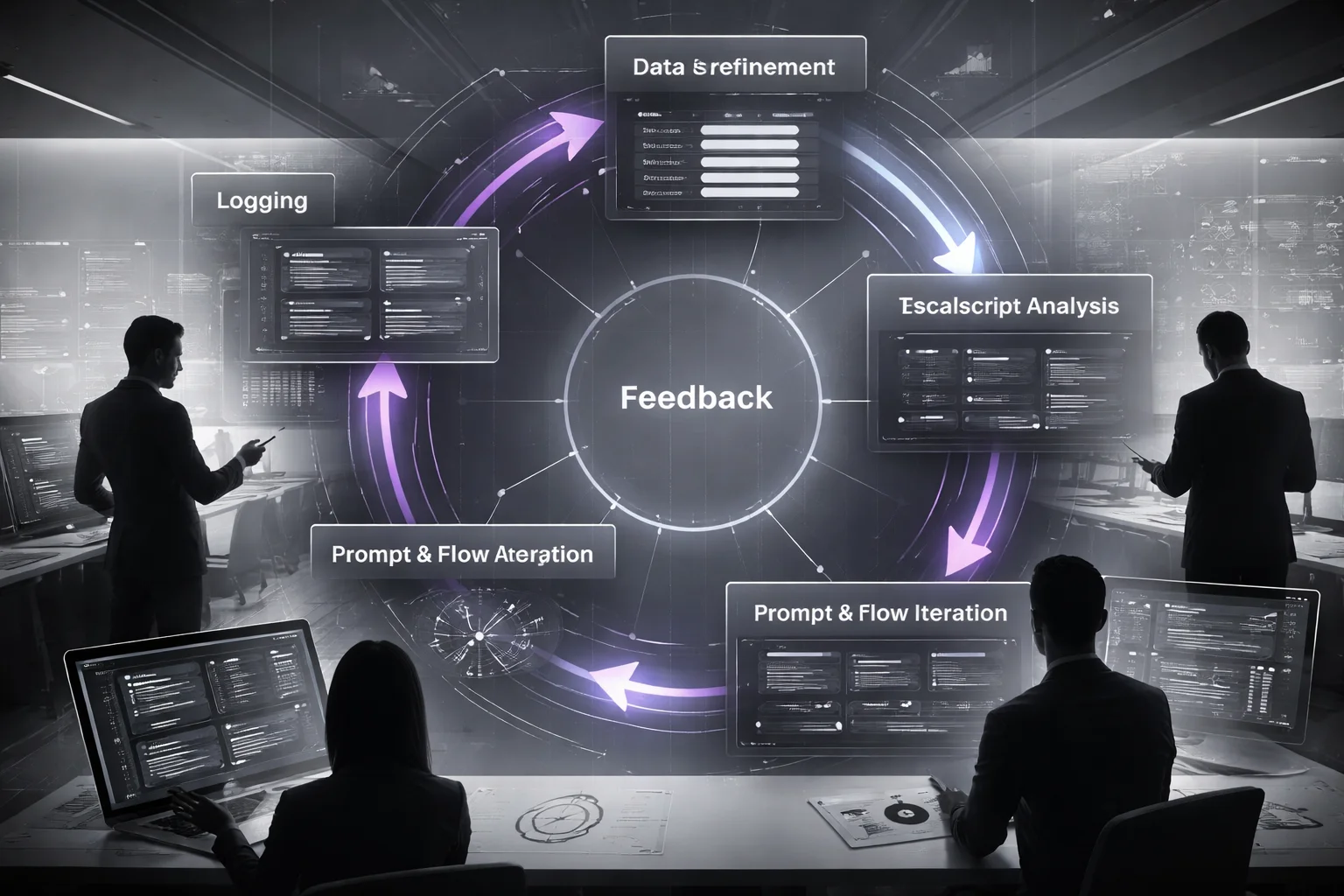

The refinement loop that actually speeds up AI voice bot implementation

Give one team ownership of the whole loop: transcript review, escalation analysis, prompt rewrite, flow adjustment, redeploy, QA test. One team. Clear names attached.

Weekly sounds organized. Early on, it's too slow.

The enemy isn't error by itself. It's delay between error and fix. In early AI voice bot implementation, high-frequency failures should move on near-daily iterations, with feedback coming straight from agents who handled transfers after the bot stumbled.

Your agents usually see confusion before your dashboards do. Ask them three things after takeover: what intent was missed, what phrase threw off the system, and what question would've clarified things faster.

A good AI phone bot pilot plan treats every early call as data and every piece of data as part of a tight refinement loop. That's how you implement AI phone bot systems without making customers pay for your learning curve.

If you want a solid operating model for this stage, this Implement Ai Phone Bot Human Centered guide gets one thing very right: refinement has to stay close to real human judgment. Faster improvement doesn't come from pretending the bot is smarter than it is. It comes from keeping mistakes small enough to survive — then fixing them before tomorrow's calls sound exactly like today's did. So why are so many teams still waiting a week?

Conventional vs optimized AI phone bot rollout outcomes

Tuesday morning, 8:12 a.m., somebody calls to change a pickup window. The bot hears “track my order,” sends them down the wrong path, and by 8:14 they’re calling back already irritated, saying “representative” like they’re trying to break a curse. I’ve heard calls like that right after teams posted cheerful containment numbers in Slack.

That’s how this usually goes wrong. Not with some dramatic system crash. With a dashboard that looks healthy for a few weeks while the actual customer experience starts rotting underneath it.

A 2024 finding cited by Makebot.ai said 70% of AI rollouts fail because companies mishandle reskilling or change. I think that number should bother more people than it does. Most phone bot programs don’t fail because the voice AI is hopeless. They fail because the company rolls them out like basic IT plumbing, then acts surprised when trust drops faster than cost per call.

The choice isn’t automation versus no automation. It’s volume-first exposure versus risk-managed sequencing.

Fast Company pointed at the same problem: companies keep treating AI like a standard IT deployment even though scaling it across an organization needs a different playbook. You can hear the difference faster than you can measure it.

Customer perception

Start in the busiest queue and customers meet your least-prepared bot in the worst possible conditions. ASR misses stand out. TTS pacing sounds strange. NLU mistakes pile up where everybody notices them. A caller asks for one thing, gets routed somewhere else, mashes zero, hangs up, calls back annoyed. That first impression sticks longer than project teams want to admit.

Start smaller and the exact same voice AI sounds far more competent. Intake works. Triage works. After-hours capture works. In those moments, a follow-up question doesn’t feel clueless. It feels normal.

I’d argue this is where most teams blow it: they expose the bot to maximum traffic before it has earned any trust at all.

Containment quality

This is where dashboards lie to your face.

Volume-first launches often report strong containment because calls stayed inside the system. Fine. Listen to 25 recordings and the story changes fast. People repeat themselves twice. They take the wrong option just to get somewhere. They give up and call back later from the parking lot or their office line. That’s not success. That’s fake containment with decent formatting.

Risk-managed sequencing usually gives you lower early containment numbers, but they mean something real. You find where ASR breaks. You see where NLU confuses intents. You catch TTS wording that creates friction before broad conversational IVR automation hits prime time.

Lower but honest beats higher but fictional every time.
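One way to put a number on the gap, sketched under assumptions: count a call as honestly contained only if the same caller doesn't call back within some window. The 24-hour window and the dictionary keys are mine, and matching on caller ID will miss the person who rings back from the office line.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def containment_rates(
    calls: List[dict],
    callback_window: timedelta = timedelta(hours=24),  # assumed window
) -> Tuple[float, float]:
    """Return (reported, honest) containment as fractions of all calls.

    Each call needs: caller_id, started_at (datetime), transferred (bool).
    """
    if not calls:
        return (0.0, 0.0)

    def called_back(c: dict) -> bool:
        return any(
            o["caller_id"] == c["caller_id"]
            and c["started_at"] < o["started_at"] <= c["started_at"] + callback_window
            for o in calls
        )

    contained = [c for c in calls if not c["transferred"]]
    honest = [c for c in contained if not called_back(c)]
    return (len(contained) / len(calls), len(honest) / len(calls))
```

When the two numbers sit close together, the dashboard is probably telling the truth. When they don't, go listen to recordings.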

Agent workload

The roughest transfers show up after volume-first launches. Agents pick up callers who are already irritated, already skeptical of the channel, already tired of repeating themselves. Then the bot summary is thin or wrong, so the agent has to restart from scratch while handle time climbs and leadership keeps saying the rollout is on track.

Risk-managed sequencing doesn’t stop escalations. It makes them readable. Agents still get handoffs, but now you can inspect missed-intent patterns inside your AI call center chatbot, tighten dialog logic, and fix what’s actually broken without dumping a wave of angry transfers onto the floor.

Time-to-confidence

Volume-first gives you visibility fast and confidence late.

Risk-managed sequencing gives you less visibility at first, then real confidence sooner because each failure is easier to diagnose during AI voice bot implementation. A small queue tells you what happened. A giant queue just tells you something went wrong somewhere.

That’s why launches can look fine for a month and then feel messy everywhere else: teams confuse exposure with progress.

Big launch plans are seductive. Big queue coverage. Big internal announcement. Feels bold. Usually it’s just noisy.

If you’re picking an AI phone bot rollout strategy or building an AI phone bot pilot plan, don’t chase immediate scale. Earn it in lower-risk flows first, then expand once the signals are clean. This AI voice bot call center deployment strategy is a better model if you want fewer illusions and better outcomes. How much avoidable damage are you willing to accept just so you can say you launched faster?

Implementation planning for an AI phone bot pilot

80%.

That’s the number that sticks with me: according to a 2026 Chatbot.com report, 80% of sales and marketing leaders have already put AI chatbots into customer experience or are planning to. I think that stat makes a lot of teams weirdly overconfident. If everyone’s doing it, people assume the hard part is deciding which vendor to buy and what date to announce. It isn’t.

Picture something smaller. More annoying. More real. It’s 7:12 p.m. on a Tuesday. A clinic front desk is closed. The voicemail box is almost full. A parent is calling because her kid has a fever and she needs to move a Thursday appointment before the morning rush starts. That’s the moment your pilot gets judged. Not in the steering committee. Not in the deck. Right there, on a call that should’ve taken maybe 90 seconds.

Most teams plan these pilots backwards. Scope, timeline, vendor, launch date. Nice spreadsheet stuff. Then they wonder why the pilot spits out noise instead of anything useful.

The part I’d argue matters most usually gets buried in the middle: keep the first pilot painfully narrow. One caller journey. One owner. One set of rules. One learning loop.

Start with something you can actually score without debating it for three weeks. After-hours intake works. Appointment rescheduling works. Guided triage can work too, if you define it tightly enough that the bot isn’t freelancing its way through edge cases. What I wouldn’t do first is broad conversational IVR automation across a main service line. That’s how you invite every strange caller behavior into week one and ruin your read on performance before you’ve learned anything clean.

And ownership can't be fuzzy. Not “cross-functional support.” Not “shared accountability.” I disagree with the way companies romanticize shared ownership here, because I’ve seen how it plays out at 8:03 a.m. when voice AI starts drifting, transfers get awkward, QA flags a pattern, operations blames product, product blames tuning, and nobody decides whether to pause the flow. Put one person in charge end to end across operations, product, and QA. One name.

Then draw lines early for what the conversational AI bot isn’t allowed to do yet. No policy exceptions. No sensitive changes without confirmation. No bluffing through unusual situations just because the voice sounds sure of itself. Confident is cheap. Correct is harder.

The metrics should tell you how fast you’re learning, not how pretty the demo sounded. Watch speech recognition (ASR) accuracy by intent. Check natural language understanding (NLU) confusion rates. Log fallback frequency, transfer reasons, and caller completion quality. Teams get hypnotized by text-to-speech (TTS). Fine, make it sound decent. But unless the voice is truly painful, it usually matters less than people think. I’ve seen polished voices paired with awful intent routing, and callers hated those bots anyway.
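Two of those learning metrics are cheap to compute once agents label what the caller actually wanted. A minimal sketch, assuming each reviewed call carries a predicted intent, an agent-confirmed intent, and a fallback flag (all hypothetical field names):

```python
from collections import Counter
from typing import List


def pilot_metrics(calls: List[dict]) -> dict:
    """Summarize NLU confusion and fallback frequency over a batch of reviewed calls."""
    n = len(calls)
    confusions = Counter(
        (c["predicted_intent"], c["actual_intent"])
        for c in calls
        if c["predicted_intent"] != c["actual_intent"]
    )
    return {
        "nlu_confusion_rate": sum(confusions.values()) / n if n else 0.0,
        "fallback_rate": sum(1 for c in calls if c["fallback"]) / n if n else 0.0,
        "top_confusions": confusions.most_common(3),  # (predicted, actual) pairs
    }
```

The top confusion pairs are the useful part. A rate tells you something is off; the pair tells you which prompt to rewrite.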

The timing doesn’t need to be dramatic either: 30 days to establish a baseline, 30 days to fix what’s breaking, 30 days to prove you can repeat the result in an adjacent queue. Ninety days is enough to learn something real without pretending version one should handle everything.

If you want a practical operating model for what comes after the pilot, this AI voice bot call center deployment strategy is a good next step.

So that’s the move: shrink the pilot until the signal is obvious, give one person actual authority, set hard boundaries, and measure whether callers complete the job cleanly. If 80% of leaders are already moving this direction, do you really want your first test to teach you nothing?

The question worth sitting with

To implement AI phone bot systems well, you don’t start with the biggest queue. You start where the bot can learn fast, recover safely, and earn trust before it earns scale.

So pick one narrow use case, wire in the basics that actually matter (CRM integration, human handoff escalation, QA testing, and fallback and recovery flows), then measure containment, error rate, and customer friction every week. Above all, sequence your AI voice bot implementation around learning quality, not call volume, because a bad early rollout poisons confidence long before the model improves.

And watch your operating model as closely as your speech recognition (ASR) and NLU. Most teams don’t fail on voice AI alone. They fail on change management, ownership, guardrails, and compliance.

If your pilot is designed to prove the bot can talk, instead of proving your business can absorb and improve it, what exactly are you scaling?

FAQ: Implement AI Phone Bot

What does it mean to implement an AI phone bot?

To implement AI phone bot systems, you're not just turning on a voice assistant. You're putting together a working stack that includes speech recognition (ASR), natural language understanding (NLU), text-to-speech (TTS), dialog management, and the integrations that let the bot actually do useful work. According to Nextiva, an AI phone system handles the full lifecycle of a call, which is why setup matters more than the demo.

How do you implement an AI phone bot without increasing risk?

You start with narrow use cases, clear guardrails and compliance rules, and a fast human handoff escalation path. That means limiting what the bot can say, defining fallback and recovery flows, and routing edge cases to agents before the conversation goes sideways. Technical guardrails are only half of it, though. Unmanaged change is the bigger risk: a 2024 finding cited by Makebot.ai says 70% of AI rollouts fail because of poor reskilling and change management, not just bad tech.

Why do most AI phone bot rollouts fail early?

Most teams treat AI voice bot implementation like a normal software launch, and that's where things break. Fast Company pointed out that scaling AI needs a different operating model, with transcript review, QA testing, retraining, and tighter business ownership. If you skip that loop, intent classification drifts, fallback rates rise, and trust disappears fast.

Can an AI phone bot integrate with an existing IVR and call routing system?

Yes, and it usually should. A good conversational IVR automation setup can sit in front of, beside, or inside your current call routing logic, then pass calls based on intent, account status, language, or urgency. The important part is call routing integration that preserves queue rules, reporting, and agent context instead of blowing up what already works.

Does an AI phone bot require a pilot before full rollout?

Almost always, yes. An AI phone bot pilot plan lets you test one or two narrow, low-risk intents, check containment, and find failure patterns before you expose the bot to billing disputes, cancellations, or regulated conversations. That's the part people rush past, even though quick pilot wins usually decide whether the broader AI phone bot rollout strategy gets funding.

How do you measure success for an AI phone bot in a call center?

Start with a small KPI set: containment rate, deflection rate, average handle time, transfer rate, fallback rate, and customer satisfaction. Then tie those numbers to business outcomes like cost per resolved call and agent time returned to complex cases. According to Nextiva, customer support held 42.4% of the chatbot market in 2024, which tells you where measurement discipline matters most.

What guardrails should be added to prevent unsafe or incorrect AI phone bot responses?

Set hard boundaries on topics, approved responses, authentication steps, and when the bot must stop and transfer. You also want policy checks for regulated language, confidence thresholds for intent classification, and transcript monitoring for quality assurance (QA) testing. If the bot isn't sure, it shouldn't guess. It should recover, clarify, or hand off.
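As a sketch of that recover, clarify, or hand off rule: the blocked topics, both thresholds, and the one-clarification cap below are assumptions for illustration, not values from any vendor.

```python
# Hypothetical topics the bot must never handle on its own.
BLOCKED_TOPICS = {"payment_dispute", "fraud_alert", "policy_exception"}


def next_action(
    intent: str,
    confidence: float,
    clarify_attempts: int,
    answer_at: float = 0.8,   # assumed: confident enough to answer
    clarify_at: float = 0.5,  # assumed: worth one clarifying question
    max_clarify: int = 1,     # assumed: never loop the caller
) -> str:
    """Return 'answer', 'clarify', or 'transfer' for the current turn."""
    if intent in BLOCKED_TOPICS:
        return "transfer"  # hard boundary, regardless of confidence
    if confidence >= answer_at:
        return "answer"
    if confidence >= clarify_at and clarify_attempts < max_clarify:
        return "clarify"
    return "transfer"  # unsure and out of retries: hand off, don't guess
```

Note the order. The topic check runs before the confidence check, so a very confident bot still can't talk its way into a fraud conversation.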

What integration steps are required to connect an AI phone bot to CRM and ticketing systems?

You need the bot to identify the caller, fetch the right record, write call outcomes back to the CRM, and open or update tickets without breaking your existing workflows. In practice, that means mapping intents to actions, setting permissions, defining data fields, and testing failure states like duplicate records or missing customer IDs. CRM integration and ticketing system integration are where a promising demo turns into an AI call center chatbot that your team can actually trust.
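The failure states are the part worth sketching, since that's where demos and production part ways. This example assumes CRM records arrive as a list of dictionaries with a `customer_id` key. A real integration would go through your CRM's own API, which isn't shown here.

```python
from typing import List, Optional, Tuple, Union


def resolve_customer(
    records: List[dict], customer_id: Optional[str]
) -> Tuple[str, Union[dict, str]]:
    """Return ('ok', record) only when exactly one record matches.

    Every other case returns ('handoff', reason) so the bot never writes
    a call outcome to the wrong account.
    """
    if not customer_id:
        return ("handoff", "missing_customer_id")
    matches = [r for r in records if r.get("customer_id") == customer_id]
    if not matches:
        return ("handoff", "no_record")
    if len(matches) > 1:
        return ("handoff", "duplicate_records")
    return ("ok", matches[0])
```

Each handoff reason doubles as a test case. If your QA plan doesn't include a caller with two records and a caller with none, it isn't finished.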