AI Voice Bot Development for Natural Conversation

Most AI voice bots don't fail because the models are bad. They fail because teams build for demos, not for conversation.

I've seen smart engineers ship polished prototypes that collapsed the second a caller interrupted, mumbled, changed their mind, or just sounded human. That's the real story behind AI voice bot development: not bigger models, but better turn-taking, tighter latency, cleaner state handling, and a brutal obsession with how people actually speak.

This article breaks that down in 7 parts. You'll see where natural conversation really comes from, what the architecture has to do in real time, and why most voice teams measure the wrong things.

What AI Voice Bot Development Really Means

82%. That’s the share of companies that have already integrated voice assistant technology into operations, according to Master of Code. I’ll be honest: that number makes me flinch a little, because a lot of those companies probably think they built a voice system when what they really bought was a pile of parts with good branding.

I’ve watched this happen up close. One team rolled out a voice pilot that looked sharp in the demo room. Speech recognition? Fine. Synthetic voice? Smooth enough to impress executives. Intent detection? Passed all the scripted tests. Everyone gave that quiet little nod like the hard part was over.

It wasn’t.

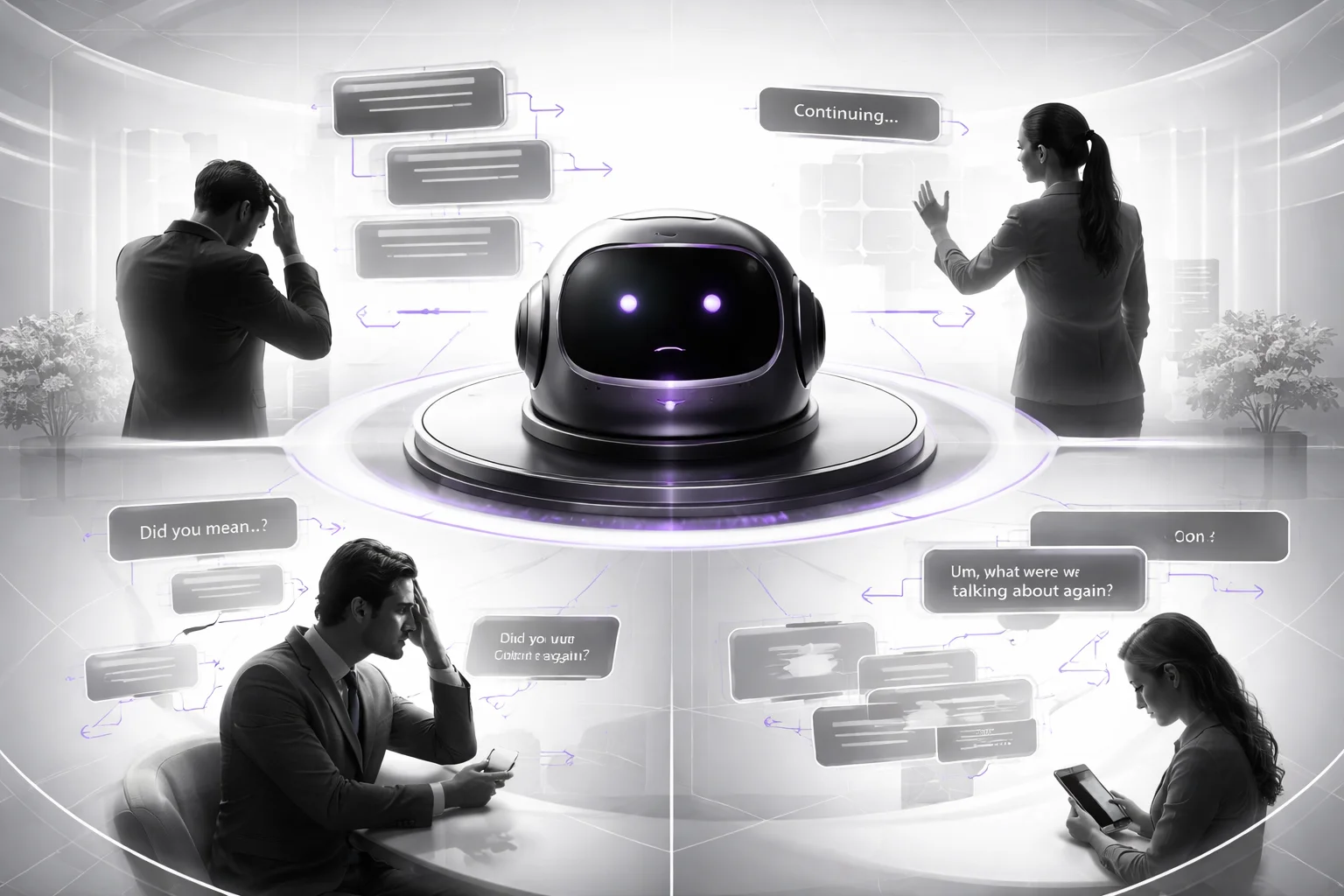

The first real callers started doing normal human stuff, and the whole thing got weird fast. People interrupted it halfway through a sentence. They changed their mind in the middle of an answer. They said things like, “Wait, what do you mean?” One caller paused for maybe 800 milliseconds to think, and the bot jumped in like an overeager intern on day one. Another corrected themselves, and the system latched onto the first answer anyway.

The team blamed the model. I think that’s usually the comforting lie.

The real problem sat in the middle, where most teams don’t want to look too closely: dialogue management, latency, turn-taking, and conversation state management. That’s what decides whether a bot feels usable or feels like arguing with a vending machine that somehow got funding.

AI voice bot development is building something that can survive live conversation under pressure, not just convert speech to text and text back to speech.

That sounds obvious until you see how many projects are really just STT, TTS, and an NLP layer wired together with optimism. Pick your vendors. Put recognizable logos on an architecture slide. Hope conversation appears on its own. It won’t.

I’d review a voice bot by looking at three things, and I wouldn’t start with vendor selection.

Timing. The system has to process streaming audio while it’s still listening. Real-time voice bot architecture lives or dies here. A pause might mean “I’m done.” It might mean “I’m thinking.” If your bot can’t tell which one happened, users notice instantly.

Interruption. A strong barge-in voice bot doesn’t just detect overlap. Demo systems fake that all the time. The hard part is deciding whether to stop speaking, what context to hold onto, and how to resume without sounding lost or rude.

Memory. Session state tracking has to survive corrections, clarifications, reversals, and messy phrasing from actual people. “No, not Tuesday — sorry — Thursday morning” can’t trigger some quiet internal collapse.
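To make the timing point concrete, here is a minimal Python sketch of an end-of-turn guess that looks at both the silence length and how the partial transcript ends. Everything in it is an assumption for illustration: `is_end_of_turn`, `EndpointConfig`, the `TRAILING_OFF` word list, and the 500 ms and 1500 ms thresholds are hypothetical, and real systems usually lean on acoustic and prosodic cues as well.

```python
from dataclasses import dataclass

# Hypothetical trailing words that hint the caller is still mid-thought.
TRAILING_OFF = {"um", "uh", "and", "but", "so", "the", "my", "to", "or"}


@dataclass(frozen=True)
class EndpointConfig:
    short_pause_ms: int = 500   # assumed: enough silence after a complete-sounding phrase
    long_pause_ms: int = 1500   # assumed: after this much silence, stop waiting


def is_end_of_turn(partial_text: str, silence_ms: int,
                   cfg: EndpointConfig = EndpointConfig()) -> bool:
    """Guess whether a pause means "I'm done" (True) or "I'm thinking" (False)."""
    words = partial_text.lower().split()
    if not words:
        return False  # nothing said yet, so there is no turn to end
    if silence_ms >= cfg.long_pause_ms:
        return True   # very long silence: take the turn regardless
    last_word = words[-1].strip(".,?!")
    return silence_ms >= cfg.short_pause_ms and last_word not in TRAILING_OFF


print(is_end_of_turn("I want to change my", 800))     # False: the sentence trails off
print(is_end_of_turn("Thursday morning works", 800))  # True: complete-sounding phrase
```

The same 800 ms pause produces two different decisions, which is the whole point: silence alone doesn't tell you whose turn it is.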

That’s why two demos can use the same speech-to-text engine, the same text-to-speech provider, and roughly the same NLP stack, yet one feels smooth and the other feels broken in a way nobody can quite explain in the meeting after.

The market has already moved past treating voice like a standalone IVR replacement. Chatbot.com points out that voice-first systems are moving toward multimodal setups where text, images, and document analysis all show up in the same experience. So your bot probably won’t live alone anyway. It’ll sit inside a bigger customer journey with handoffs, follow-ups, screens, messages, and maybe uploaded PDFs if you’re working in insurance or healthcare.

The money tells the same story. Allied Market Research projects conversational AI will reach $32.62 billion by 2030. That market doesn’t grow because STT and TTS exist. Those have existed for years. It grows because companies need systems that can stay coherent while people interrupt themselves, rethink answers, ask for clarification, and generally act like humans instead of test scripts.

A side note people keep underrating: natural speech evaluation. If your scorecard stops at word error rate and task completion, you’re grading the wrong thing. Users usually don’t care about your benchmark sheet first. They care whether the bot knows when to speak, when to wait, and when to get out of the way.

If you’re still trying to decide whether voice belongs in your product at all, this voice bot vs chatbot decision matrix will help more than another polished vendor walkthrough. So before you buy another stack or approve another pilot, ask yourself: are you building a conversational system—or just assembling audio components and hoping nobody notices?

Why Most Voice Bots Feel Robotic

Nearly 2/3 of consumers want more voice-based exchanges with AI and chatbots. That number from Master of Code always gets me, because it kills the lazy excuse right away. People aren't rejecting voice bots on principle. They're willing to use them. They just don't forgive a bad call.

I watched that happen on a support line. Seven words did the damage: “No, not that order, the other one.” The bot hesitated, cut its own audio a fraction too late, lost the earlier reference, and came back with a clarification question no decent human agent would've asked. That's not some spooky synthetic-voice issue. That's a system failing at conversation.

Everybody loves blaming the voice. Weird cadence. Flat delivery. Slightly uncanny timing. Sure, STT matters. TTS matters too. Automatic speech recognition has gotten much better, and Master of Code isn't talking about theory here — businesses are already using voice assistants for transcription, translation, and speech workflows inside actual operations. The raw audio layer isn't the part that should still be embarrassing teams in 2025.

The break happens after the sound becomes text.

A lot of teams are still shipping voice flows like it's 2016 IVR logic in a nicer outfit: prompt, wait, parse, respond, repeat. One turn at a time. One clean answer per prompt. A user who politely follows instructions like they're building a bookshelf from IKEA and has all afternoon.

Real callers are messier than that. They interrupt. They answer two questions at once. They change their mind halfway through the sentence. They say yes and no back-to-back. They correct one detail without retelling the whole story because they assume your system was paying attention the first time.

Usually, it wasn't.

A bad barge-in voice bot is brutal because you can hear that it's technically listening while functionally ignoring you. I'd argue that's worse than a flat synthetic voice. Half-listening is what really breaks trust — the bot stops speaking just late enough to feel clumsy, drops session memory, then asks an awkward follow-up that exposes weak conversation state management. I've seen this sink calls in under 90 seconds.

That's why so many conversational AI voice agents look polished in demos and then collapse in production. The real-time voice bot architecture can't keep up with interruptions. The NLP layer catches intent but misses correction context. The clarifying question makes sense if you're staring at a parser output and no sense at all if you're an actual person trying to fix an order mistake before lunch.

Customers catch it fast.

Demand isn't the bottleneck here. Trust is. Master of Code says people want more of these interactions, not fewer. One slow, rigid, forgetful call can poison the whole channel for that customer. I've seen teams spend six months tuning prompts and swapping voices while the real problem was state falling apart after interruption number two.

The cost shows up where operations teams actually feel pain: low containment, more handoffs to human agents, weak adoption in channels that were supposed to absorb support volume instead of pushing even more pressure onto it.

The market isn't slowing down to let anyone figure this out later. Allied Market Research puts conversational AI on a 20.0% CAGR through 2030. That's a lot of money rushing toward systems that still forget what "the other one" refers to.

I'd start with stress testing turn-taking instead of obsessing over happy-path completion rates. Interruptions aren't edge cases; they're normal behavior. Treat memory like infrastructure, not polish — if context disappears during a correction, the bot will sound robotic no matter how smooth the voice is. Judge quality by something harsher than task completion too. Use natural speech evaluation. If the exchange feels stiff, slow, or oblivious, users will call it broken even when your dashboard reports success.

The missing piece in AI voice bot development isn't better voices alone. It's systems that can survive messy turn-taking and hold state under pressure.

If you're rolling one out inside support operations, read this AI Voice Bot Call Center Deployment Strategy before you spend money learning these mistakes the hard way.

Real-Time Conversation Architecture for Voice Bots

Why does a voice bot that says mostly correct things still feel awful to use?

I’ve watched teams blame the model for that. New prompt. New classifier. New vendor. Same ugly call. The caller asks to reschedule a delivery while driving, gets hit with two seconds of silence, and suddenly nobody cares how clever your intent system is.

Voice gets judged harder than chat for a simple reason: people use it with their hands full and their attention split. RipenApps calls out the rise of voice-first use in moments like multitasking and driving. Yeah. Exactly there. On I-95, mid-merge, two seconds of dead air doesn’t read like “minor latency issue.” It reads like failure.

That’s the answer. Timing.

But not timing by itself. I’d argue the real story is architecture that behaves like it’s impatient. Streaming speech-to-text (STT) can’t wait politely for the speaker to finish; it has to emit partial hypotheses as the sentence is still coming in. Incremental natural language processing (NLP) has to chew on fragments right away, score likely intent, pull entities, and guess next actions before the user lands the final word. Then a low-latency orchestrator decides what happens next: wait, clarify, prefetch account data, or get audio ready. Text-to-speech (TTS) should start as soon as the response is stable enough to say aloud.

Not perfect. Stable enough.

That distinction matters more than people want to admit. A lot of teams treat certainty like the goal line. I don’t buy that. In production, responsiveness wins first. Good voice agents manage risk while the conversation keeps moving; they don’t sit there hoping for 100% confidence like they’re waiting for divine approval.
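A rough Python sketch of "stable enough" might look like this. It assumes a toy keyword classifier standing in for incremental NLP, and a made-up rule: act once the same intent clears a confidence floor on two consecutive partial hypotheses. `classify_partial`, `first_stable_intent`, the scores, and both thresholds are hypothetical, not any vendor's API.

```python
from typing import Iterable, Optional, Tuple


def classify_partial(text: str) -> Tuple[str, float]:
    """Toy stand-in for an incremental NLP model (keyword rules, invented scores)."""
    t = text.lower()
    if "reschedule" in t:
        return ("reschedule_delivery", 0.9 if "delivery" in t else 0.7)
    if "balance" in t:
        return ("check_balance", 0.85)
    return ("unknown", 0.2)


def first_stable_intent(partials: Iterable[str], min_conf: float = 0.8,
                        min_repeats: int = 2) -> Optional[Tuple[str, int]]:
    """Return (intent, index of the partial where it became stable), or None."""
    last_intent, streak = None, 0
    for i, text in enumerate(partials):
        intent, conf = classify_partial(text)
        if conf >= min_conf and intent == last_intent:
            streak += 1                      # same confident intent again
        elif conf >= min_conf:
            last_intent, streak = intent, 1  # new confident intent, start counting
        else:
            last_intent, streak = None, 0    # low confidence resets the streak
        if streak >= min_repeats:
            return intent, i
    return None


# Partial hypotheses as a streaming STT engine might emit them.
partials = [
    "I need to",
    "I need to reschedule",
    "I need to reschedule my delivery",
    "I need to reschedule my delivery to",
    "I need to reschedule my delivery to Friday",
]
result = first_stable_intent(partials)
print(result)  # ('reschedule_delivery', 3)
```

In this toy run the intent stabilizes one partial before the caller says "Friday", so an orchestrator could already be prefetching delivery data while the date is still arriving.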

The part people bury — and then pay for later — is state control. You need dialogue management built with finite state machines or event-driven state charts, plus session memory that tracks slot values, earlier confirmations, interruptions, and unresolved branches. Miss that piece and your bot might notice an interruption without knowing how to recover from it. It hears the problem and still fumbles the handoff.

A barge-in voice bot exposes this fast. While TTS is talking, the system still has to listen to streaming audio for interruption signals and turn-taking cues. Caller says, “No, the billing account,” halfway through playback? The bot has to cut speech immediately, preserve context, update conversation state management, and continue from the corrected branch instead of trudging back to square one like nothing happened. I’ve seen systems miss this by 400 milliseconds, and even that tiny delay makes them sound weirdly stubborn.
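Here is one way the state-control piece could be sketched in Python: a small event-driven state machine where a barge-in during playback returns to listening without wiping session memory. The state and event names, the `TRANSITIONS` table, and the `TurnMachine` class are all hypothetical, and a production system would also have to cancel the audio stream itself.

```python
from enum import Enum, auto


class State(Enum):
    LISTENING = auto()
    THINKING = auto()
    SPEAKING = auto()


class Event(Enum):
    USER_FINISHED = auto()
    RESPONSE_READY = auto()
    PLAYBACK_DONE = auto()
    BARGE_IN = auto()


# Allowed transitions; anything not listed is ignored in that state.
TRANSITIONS = {
    (State.LISTENING, Event.USER_FINISHED): State.THINKING,
    (State.THINKING, Event.RESPONSE_READY): State.SPEAKING,
    (State.SPEAKING, Event.PLAYBACK_DONE): State.LISTENING,
    (State.SPEAKING, Event.BARGE_IN): State.LISTENING,
    (State.THINKING, Event.BARGE_IN): State.LISTENING,
}


class TurnMachine:
    def __init__(self):
        self.state = State.LISTENING
        self.slots = {}                  # session memory survives every transition
        self.interrupted_prompt = None   # what the bot was saying when cut off

    def handle(self, event, prompt=None):
        next_state = TRANSITIONS.get((self.state, event))
        if next_state is None:
            return self.state            # event makes no sense here, so ignore it
        if event is Event.BARGE_IN and self.state is State.SPEAKING:
            self.interrupted_prompt = prompt  # keep context for a clean resume
        self.state = next_state
        return self.state


machine = TurnMachine()
machine.slots["account"] = "checking"
machine.handle(Event.USER_FINISHED)
machine.handle(Event.RESPONSE_READY)
machine.handle(Event.BARGE_IN, prompt="Your checking balance is")
print(machine.state.name, machine.slots, machine.interrupted_prompt)
```

The detail worth noticing is what doesn't happen on `BARGE_IN`: the slots aren't cleared and the flow doesn't restart. The machine just records what it was saying and goes back to listening.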

This isn’t some research demo party trick anymore. Master of Code reports that 71% of business and technology professionals say their organizations have invested in bots for CX support. NextLevel.AI projects the voice assistant market will reach $33.74 billion by 2030, up from $7.35 billion in 2024. Big money, big ambition, same old problem: polished demos hide timing failures until real callers interrupt, correct themselves, mumble, change topics, and wreck the neat little script.

If you’re building for actual production calls instead of investor theater, this AI Voice Bot Call Center Deployment Strategy is a better place to look than another glossy prototype reel.

The weird part is users will often forgive an occasional automatic speech recognition miss. They won’t forgive bad pacing. So if “natural” is your scorecard, you’re not only judging language quality — you’re judging whether the system knows when to speak, when to stop, and how to recover without sounding lost. If that’s true, why are so many teams still shopping for a smarter model before they fix turn-taking?

How to Handle Interruptions, Barge-In, and Clarifications

Everybody says it: “Just turn on barge-in.”

That advice shows up in decks, vendor demos, and product meetings like it's the whole answer. It isn't. It's the checkbox version of conversation design.

A voice bot that interrupts itself because it heard a little breath noise isn't being responsive. It's malfunctioning. A bot that keeps reading the script after someone says “wait,” “no,” or “agent” feels just as bad in the other direction. I've heard support lines do this three times in one call, and by about the third collision you can feel the caller reaching for the hang-up button.

People talk about detection like that's the hard part. I'd argue that's outdated thinking. Detection matters, sure. But the piece that actually decides whether the call feels sane is policy: what an interruption means, what gets paused, what stays active, what gets confirmed, what gets ignored.

That's where conversation state management either proves it's real or exposes itself as two disconnected systems — one that hears audio and another that blindly pushes prompts.

Inside a real-time voice bot architecture, I’d separate interruptions into three buckets: hard interrupt, soft interrupt, and ignore. Hard interrupt means kill text-to-speech (TTS) right now — “Stop, that's wrong,” “Agent,” “Different address.” Soft interrupt is overlap like “yeah” or “okay,” where the bot usually keeps going unless confidence climbs. Ignore is coughs, hold music bleed, cross-talk from another person in the room, or low-confidence junk from automatic speech recognition.

The thresholds have to be explicit. Actual numbers tied to behavior. Not gut feel from a staging demo. If speech-to-text (STT) comes back with high-confidence corrective language like “no,” “wait,” or “different address,” playback should pause immediately and the active slot should stay intact. If the result lands in that messy middle — say 0.58 confidence on a partial correction — finish the current phrase and ask one tight follow-up: “I heard an interruption. Did you want to correct the address?” That's a lot better than the old failure mode where the whole flow snaps back to step one like nothing happened.
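As a sketch of what "explicit thresholds tied to behavior" could mean, the Python below maps an overlapping utterance and its STT confidence to one of four actions: the three buckets plus the messy-middle "clarify" case. The phrase list, the function name `classify_interruption`, and the 0.80 and 0.50 cutoffs are assumptions chosen for illustration, not recommended values.

```python
import re

# Hypothetical phrase list; a real system would tune this per language and domain.
CORRECTIVE = ("stop", "no", "wait", "agent", "different address", "that's wrong")


def classify_interruption(text: str, confidence: float,
                          hard_min: float = 0.80, ignore_below: float = 0.50) -> str:
    """Return 'hard', 'clarify', 'soft', or 'ignore' for speech heard during TTS."""
    words = re.findall(r"[a-z']+", text.lower().replace("\u2019", "'"))
    normalized = " ".join(words)
    if not words or confidence < ignore_below:
        return "ignore"    # coughs, cross-talk, low-confidence junk
    # Multi-word phrases match as substrings; single words must match whole
    # words, so "know" does not count as "no".
    corrective = any(
        (phrase in normalized) if " " in phrase else (phrase in words)
        for phrase in CORRECTIVE
    )
    if corrective and confidence >= hard_min:
        return "hard"      # cut TTS now, keep the active slot
    if corrective:
        return "clarify"   # finish the phrase, then ask one tight follow-up
    return "soft"          # backchannel like "yeah": keep talking


print(classify_interruption("Stop, that's wrong", 0.93))  # hard
print(classify_interruption("different address", 0.58))   # clarify
print(classify_interruption("yeah", 0.90))                # soft
print(classify_interruption("(cough)", 0.20))             # ignore
```

The value of writing it down like this is that every threshold becomes something you can argue about, log, and tune, instead of a feeling someone had in staging.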

Dialogue management is where this gets won or lost.

If a caller says, “No, Tuesday morning... actually afternoon,” a good system doesn't treat that like an error dump. It treats it like an event inside an active appointment intent. The intent stays alive. One entity changes. Only that changed field gets confirmed. Then the call keeps moving. That's what makes conversational AI voice agents feel steady instead of flimsy.
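In code, that behavior can be as small as the Python sketch below. It assumes the intent is a plain dict with a `slots` mapping and that NLP has already extracted the corrected values; `apply_correction` and the slot names are hypothetical.

```python
def apply_correction(intent: dict, heard: dict) -> list:
    """Update only the slots that changed and return their names for confirmation."""
    changed = [name for name, value in heard.items()
               if intent["slots"].get(name) != value]
    for name in changed:
        intent["slots"][name] = heard[name]
    return changed


# "No, Tuesday morning... actually afternoon"
appointment = {"name": "book_appointment",
               "slots": {"day": "Tuesday", "part_of_day": "morning"}}
to_confirm = apply_correction(appointment,
                              {"day": "Tuesday", "part_of_day": "afternoon"})

print(appointment["name"])   # book_appointment (the intent stays alive)
print(appointment["slots"])  # {'day': 'Tuesday', 'part_of_day': 'afternoon'}
print(to_confirm)            # ['part_of_day'] (confirm only what changed)
```

The bot then confirms the afternoon and nothing else. Re-confirming Tuesday would be the parser talking, not a conversation partner.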

Voiceflow gets the foundation right: NLP, voice recognition, automatic speech recognition, and TTS all matter. True. Still not enough. A clean stack doesn't save bad flow control any more than a powerful engine saves bad brakes.

The timing on this matters because user expectations aren't waiting around for your roadmap. Master of Code reported that 80% of firms were using or planning AI for customer service improvement by 2026. NextLevel.AI says U.S. voice assistant users will hit 157.1 million by 2026. More adoption doesn't buy forgiveness. It does the opposite.

No caller cares that your vendor crushed intent detection on a quiet internal test set from March 2025. They care whether interruptions and clarifications hold up during natural speech evaluation, with overlap, self-corrections, background chatter, impatience — all the stuff demos conveniently avoid.

If you're building for service teams, our AI Voice Bot Call Center Deployment Strategy breaks down where these policies usually crack first.

- Barge-in rule: stop instantly only for high-confidence corrections, cancellations, and escalation requests.

- Clarification rule: ask about one uncertain field at a time.

- Recovery rule: resume from the interrupted step, not from the beginning.

- Evaluation rule: test overlapping speech and self-corrections just as aggressively as happy-path intent detection.

If your bot can hear someone but can't tell whether they meant “stop,” “yes,” or just breathed near the mic, do you really have barge-in — or just a feature flag?

Conversation State Management Patterns That Work

Late in 2024, I watched a contact-center pilot go sideways on a call that should've been easy. The caller verified her identity, asked for a balance, paused because a dog was losing its mind in the background, came back, corrected one digit, then said she actually wanted to dispute a charge from last Friday. The bot had the whole transcript. Every word. And it still started guessing.

That's the part people get wrong.

Teams love blaming the model first. Bad reasoning. Weak prompting. Not enough examples. Maybe switch vendors. I've seen people keep cramming more transcript into the prompt like they're trying to shut an overstuffed suitcase with both hands and one knee. They call it memory. I don't. It's panic storage.

A real support line doesn't move in a straight line, and your system can't pretend it does. If durable facts live inside prompt history as one giant blob of recent text, your conversational AI voice agents will drift the minute a caller changes course.

The fix isn't glamorous. Durable state belongs in an orchestration layer. Not buried in token soup. Not held together by hope.

I've also seen the opposite mistake, and I'd argue it's just as bad: teams lock everything into a rigid scripted flow because it's easy to test and easy to explain in a slide deck. Sure, it's predictable. Right up until a real person goes off-path and the whole thing snaps. A pure LLM-driven flow sounds smooth for 20 seconds and then wanders off. The only thing I've seen hold up in production is the middle: event-driven orchestration with bounded memory.

Here's what that split actually looks like in conversation state management. Persistent session state keeps what must survive across turns: customer ID, selected account, prior confirmation, escalation status, consent flags, handoff markers. Short dialog state keeps what only matters right now: the current question, the slot being corrected, the latest unresolved ambiguity, recent turns from speech-to-text (STT), active repair attempts. That's how you stop dragging stale context through every exchange and confusing dialogue management.
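A minimal Python sketch of that split, assuming two separate containers: one for durable session facts and one bounded window for the current exchange. The class names, field names, and the four-turn window are hypothetical choices for illustration.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, Optional


@dataclass
class SessionState:
    """Durable facts that must survive across turns and task switches."""
    customer_id: Optional[str] = None
    selected_account: Optional[str] = None
    confirmations: Dict[str, bool] = field(default_factory=dict)
    consent_given: bool = False
    escalated: bool = False


@dataclass
class DialogWindow:
    """Short-lived context that only matters for the current exchange."""
    max_turns: int = 4                      # assumed window size
    current_question: Optional[str] = None
    slot_in_repair: Optional[str] = None
    recent_turns: Deque[str] = field(default_factory=deque)

    def add_turn(self, text: str) -> None:
        self.recent_turns.append(text)
        while len(self.recent_turns) > self.max_turns:
            self.recent_turns.popleft()     # old turns fall out of the window


session = SessionState(customer_id="C-1042", selected_account="savings")
window = DialogWindow()
for i in range(6):
    window.add_turn(f"turn {i}")

print(list(window.recent_turns))  # ['turn 2', 'turn 3', 'turn 4', 'turn 5']
print(session.selected_account)   # savings
```

Old turns age out of the window on their own, while the verified account never depends on whether it still happens to be in recent text.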

Interruptions make this uglier than most architecture diagrams admit. They don't pause conversations neatly. They scramble them. In a real-time voice bot architecture, if someone says, “Check my balance... actually I need to dispute a charge,” you can't overwrite path A with path B and act like nothing happened. You suspend one task, open another, and keep both visible.
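One simple way to keep both tasks visible is a task stack, sketched below in Python. `TaskStack` and the task names are hypothetical; the sketch also assumes that the most recently suspended task is the one to resume, which won't hold for every flow.

```python
class TaskStack:
    """Keeps one active task and remembers the ones it interrupted."""

    def __init__(self):
        self.active = None
        self.suspended = []

    def switch_to(self, task: str) -> None:
        if self.active is not None:
            self.suspended.append(self.active)  # suspend, don't delete
        self.active = task

    def finish_active(self):
        """Close the active task and resume the most recently suspended one."""
        done = self.active
        self.active = self.suspended.pop() if self.suspended else None
        return done


tasks = TaskStack()
tasks.switch_to("check_balance")
tasks.switch_to("dispute_charge")    # "actually I need to dispute a charge"
print(tasks.active, tasks.suspended)  # dispute_charge ['check_balance']

tasks.finish_active()
print(tasks.active)                   # check_balance
```

Once the dispute is handled, the bot can offer to pick the balance request back up instead of pretending it never heard it.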

And no, intent score alone won't save you.

Priority beats intent confidence all the time. A dispute flow usually outranks an informational flow, so your orchestrator needs business rules on top of whatever confidence score came back from natural language processing (NLP). If your system hears “balance” with 0.91 confidence and “fraud” with 0.74, but company policy says disputes jump the line, then disputes jump the line. That's not fancy AI thinking. That's basic operations.
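The rule in that example is easy to sketch in Python. The `PRIORITY` table, the intent names, and the 0.6 confidence floor are assumptions for illustration; the real values are business policy, not something a model decides.

```python
# Hypothetical business priorities: higher number jumps the line.
PRIORITY = {"report_fraud": 100, "dispute_charge": 100, "check_balance": 10}


def pick_intent(candidates, min_conf: float = 0.6):
    """Pick by business priority first, confidence second.

    `candidates` is a list of (intent_name, confidence) pairs from NLP.
    Anything below `min_conf` is treated as not heard at all.
    """
    eligible = [(name, conf) for name, conf in candidates if conf >= min_conf]
    if not eligible:
        return None
    best = max(eligible, key=lambda c: (PRIORITY.get(c[0], 0), c[1]))
    return best[0]


print(pick_intent([("check_balance", 0.91), ("report_fraud", 0.74)]))  # report_fraud
print(pick_intent([("check_balance", 0.91), ("report_fraud", 0.40)]))  # check_balance
```

The confidence floor still matters: priority should only outrank confidence among intents the system plausibly heard, or every cough becomes a fraud report.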

The market's getting too expensive for sloppy state handling anyway. Master of Code says 64% of leaders plan to increase investment in conversational AI chatbots in 2026. ASAPP Studio puts the North America market at $15 billion to $18 billion in 2026. Enterprise Bot calls out context preservation, intent recognition, sentiment analysis, and flow control as core pieces of natural conversation. Those aren't bonus features anymore.

The practical version is simpler than people expect:

- Persist: identity, verified entities, consent flags, handoff markers.

- Window: recent turns from speech-to-text (STT), current slot focus, active repair attempts.

- Suspend: interrupted tasks instead of deleting them in a barge-in voice bot.

- Escalate: after repeated low-confidence automatic speech recognition failures, policy triggers, or sentiment drop.

- Replay cleanly: pass summarized state to agents or downstream systems instead of raw speech-to-text (STT) transcript dumps.

That replay piece matters more than people think. In that late-2024 rollout I mentioned, agents got a six-line state summary instead of full transcripts that ran 40 messy turns packed with false starts and ASR noise. The summary won because agents could act immediately instead of hunting through garbage for one useful fact.
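I can't reproduce the exact summary format from that rollout here, so treat the following Python as an assumed shape only. `summarize_for_agent`, the dict keys, and the six fields are hypothetical; the point is that the handoff is built from structured state, not from the transcript.

```python
def summarize_for_agent(state: dict) -> list:
    """Build a short handoff summary from structured state, never raw turns."""
    suspended = ", ".join(state.get("suspended_tasks", [])) or "none"
    return [
        f"Customer: {state.get('customer_id', 'unverified')}",
        f"Account: {state.get('selected_account', 'not selected')}",
        f"Active task: {state.get('active_task', 'none')}",
        f"Suspended tasks: {suspended}",
        f"Open question: {state.get('open_question', 'none')}",
        f"Escalation reason: {state.get('escalation_reason', 'not stated')}",
    ]


handoff = summarize_for_agent({
    "customer_id": "C-1042",
    "selected_account": "checking",
    "active_task": "dispute_charge",
    "suspended_tasks": ["check_balance"],
    "open_question": "which charge from last Friday?",
    "escalation_reason": "policy: disputes go to a human",
    "recent_turns": ["uh my balance", "no wait", "..."],  # deliberately not included
})
print("\n".join(handoff))
```

Note that `recent_turns` is in the state but never reaches the agent. If a fact matters, it should already live in a named field.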

If your deployment sits inside support operations, this AI Voice Bot Call Center Deployment Strategy shows where these state models usually break first.

The weird truth is that good memory makes a bot sound more human by being less human. People forget details constantly. Systems shouldn't. So why are so many teams still treating prompt history like a database?

Evaluation Criteria for Natural Voice Interaction

I watched a launch go sideways because we celebrated the wrong number. The dashboard looked clean. Task success was up. People clapped. Then the real calls hit and callers did what callers always do: they interrupted, changed their minds mid-sentence, said “no, tomorrow” under their breath, and left just enough silence to make the bot sound lost. Nothing technically broke. That was the problem. It kept going like it understood.

I’ve seen this happen with teams that had gorgeous demos and studio-audio test flows. Put that same bot on a Monday morning call queue with 200 live callers and the cracks show fast.

That’s the lesson: don’t grade a voice bot on whether it behaves in a perfect script. Grade it on whether it survives messy human behavior without making the caller do extra work.

Hyperleap AI puts a number on the mess: 13% of AI chatbot conversations still get escalated to humans even in optimized deployments. I think that stat matters because it kills the fantasy that “mostly works” means natural. A natural system doesn’t just complete the happy path. It handles interruptions, corrections, hesitation, and weird phrasing without falling apart.

The framework I’d use has five checks. Start with the one that embarrasses teams fastest: interruptions. In a barge-in voice bot, the question isn’t whether speech recognition heard every word. The question is whether the system stops talking at the right moment, keeps context, and actually follows the correction. Real call shape: “No, not checking, savings.” Your speech-to-text can nail all four words and you can still fail if the dialogue manager keeps marching toward checking anyway.

Response latency tells you whether the thing feels alive. I’m not talking about average backend timings buried in an engineering report nobody reads after launch. I mean the gap a caller feels between finishing their sentence and hearing the reply begin. That’s where real-time voice bot architecture earns its keep or exposes itself. Around 700 milliseconds, people usually stay comfortable. Push beyond 1.5 seconds too often and you’ll hear “hello?” because they assume nobody caught it.
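Measured that way, the check is simple. The Python sketch below assumes you can log two timestamps per turn in milliseconds: when the caller stopped speaking and when the first reply audio played. The function names and the report fields are hypothetical; the 700 ms and 1500 ms cutoffs mirror the numbers above.

```python
def perceived_latency_ms(user_speech_end_ms: int, bot_audio_start_ms: int) -> int:
    """The gap the caller actually feels, not backend processing time."""
    return bot_audio_start_ms - user_speech_end_ms


def latency_report(latencies_ms, comfortable_ms: int = 700, too_slow_ms: int = 1500):
    if not latencies_ms:
        raise ValueError("need at least one measured turn")
    n = len(latencies_ms)
    return {
        "comfortable_share": sum(ms <= comfortable_ms for ms in latencies_ms) / n,
        "too_slow_share": sum(ms > too_slow_ms for ms in latencies_ms) / n,
        "worst_ms": max(latencies_ms),
    }


# (caller stopped speaking, bot audio started), both in ms since call start
turns = [(12400, 12800), (20100, 20750), (31000, 31900), (45200, 47000)]
latencies = [perceived_latency_ms(end, start) for end, start in turns]
report = latency_report(latencies)
print(latencies)  # [400, 650, 900, 1800]
print(report)     # {'comfortable_share': 0.5, 'too_slow_share': 0.25, 'worst_ms': 1800}
```

Reporting shares and the worst case, instead of an average, keeps one 1.8-second stall from hiding behind three fast turns.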

Repair success is the sneaky one. Teams obsess over intent accuracy on turn one because it looks neat in a slide deck. Real calls aren’t neat. People say “sorry,” “that’s wrong,” “I meant tomorrow,” and expect recovery without drama. Research cited by Digital Watch Observatory says AI still misses social signals like timing, phrasing, openings, and closings. That’s exactly why a correct label on the first turn doesn’t tell you much if the bot collapses the moment someone repairs themselves.

Task completion still matters. So does user effort. I’d argue user effort is where the truth lives and task completion is where teams hide. A caller can finish the task and still have a lousy experience if they had to repeat an account number twice, restate their goal three times, and sit through two clarification loops caused by bad conversation state management. Fancy TTS won’t save that. Doesn’t matter if your voice sounds like NPR.

- Interruption success rate: Did the bot stop immediately, retain context, and continue on the corrected path?

- Response latency: Did replies start fast enough to feel live, ideally around 700 ms instead of drifting past 1.5 seconds?

- Repair success: Did “sorry,” “that’s wrong,” or “I meant tomorrow” get resolved cleanly or spiral into more confusion?

- Task completion: Did the caller finish without getting handed off to a human?

- User effort: How many extra turns, repeats, prompts, restarts, and clarification loops did the system force?
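To turn the five checks above into numbers, you need per-call records first. The Python sketch below assumes someone, human or tooling, has already labeled each call with counts of interruptions, repairs, and extra turns. `CallRecord`, `scorecard`, and every field name are hypothetical.

```python
import statistics
from dataclasses import dataclass
from typing import List


@dataclass
class CallRecord:
    interruptions: int              # times the caller barged in
    interruptions_handled: int      # bot stopped, kept context, followed the correction
    repairs: int                    # "sorry", "that's wrong", "I meant tomorrow"
    repairs_resolved: int
    completed_without_handoff: bool
    extra_turns: int                # repeats, restarts, clarification loops
    reply_latencies_ms: List[int]


def _rate(numerator, denominator):
    return numerator / denominator if denominator else None


def scorecard(calls):
    latencies = [ms for c in calls for ms in c.reply_latencies_ms]
    return {
        "interruption_success": _rate(sum(c.interruptions_handled for c in calls),
                                      sum(c.interruptions for c in calls)),
        "median_latency_ms": statistics.median(latencies) if latencies else None,
        "repair_success": _rate(sum(c.repairs_resolved for c in calls),
                                sum(c.repairs for c in calls)),
        "task_completion": _rate(sum(c.completed_without_handoff for c in calls),
                                 len(calls)),
        "avg_extra_turns": _rate(sum(c.extra_turns for c in calls), len(calls)),
    }


calls = [
    CallRecord(2, 1, 1, 1, True, 3, [600, 900]),
    CallRecord(2, 2, 1, 0, False, 1, [700, 1600]),
]
scores = scorecard(calls)
print(scores)
```

For these two sample calls the scorecard comes out to 0.75 interruption success, an 800 ms median latency, 0.5 repair success, 0.5 task completion, and 2.0 extra turns per call. The first call "completed", but it cost three extra turns to get there, and that's the number a completion dashboard never shows.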

If you want something practical before rollout, this AI Voice Bot Call Center Deployment Strategy will help more than another polished demo with cooperative users and perfect audio.

Use this tomorrow on your AI voice bot development work. Throw interruptions at it. Self-corrections. Mumbling. Mid-sentence changes. Dead air. Score every call hard against those five measures. Because if your conversational AI voice agents only sound good when users behave nicely, they’re not conversational. They’re scripted.

Designing a Voice Bot Roadmap for Production

Why do so many voice bots sound impressive on Thursday and feel broken by Monday?

I’ve seen the movie. Somebody gets a clean demo working for account support, everybody in the room nods, and by lunch the conversation has already jumped to rollout plans, new channels, multilingual support, analytics dashboards, maybe even a cheerful synthetic voice with better “brand alignment.” Feels productive. Usually isn’t.

Then live callers show up and ruin the fantasy in about 15 seconds. They interrupt. They answer the second question first. They ask for help before authentication is done. They change “next Thursday” to “Friday morning” halfway through the sentence, then cough right into the mic. The bot had speech-to-text, text-to-speech, NLP, all the shiny parts. Still collapsed.

Cathy Pearl said it better than most: “Design for how people actually talk, not how you want them to talk.” I think that line should be taped to every product manager’s laptop because it’s not really design advice. It’s a warning. And it points to the real problem: not bad models. Ambition. Too much of it, too early.

The roadmap should be boring. Four stages. Small scope. Tight guardrails. A lot of saying no. That’s the answer. But here’s the annoying part: “boring” doesn’t mean easy.

1) Discovery

Start with one job so specific it almost sounds underwhelming. Not “customer service.” Not “support automation.” More like: reschedule appointments by phone where authentication is already handled. One channel. One outcome. One metric that tells you if this thing works or doesn’t.

This is where teams usually try to sound strategic and end up getting vague. Don’t. Map the ugly stuff instead: where callers interrupt, where they go silent for four seconds, where they ask “sorry, what was that?”, where they switch topics, where they dump three facts into one answer because that’s how humans talk when they’re in a hurry. Figure out whether your real-time voice bot architecture really needs barge-in behavior or whether guided turns will actually hold up better.

I’d argue this stage decides whether the bot should exist at that exact moment in the customer journey at all. If it shouldn’t, no amount of polishing saves it.

2) Prototype

Don’t chase coverage yet. Chase timing.

Run dialogue management and conversation state management against 20 to 30 ugly calls. Not polished scripts. Not internal roleplay with coworkers politely waiting their turn like they’re in a training video from 2016. Real messy exchanges.

You want calls where someone says, “No, next Thursday—wait, actually Friday morning,” while background noise kicks up and they correct themselves before your system has finished processing the first date. I once watched a team test only clean flows for two weeks and then get blindsided by a 1.8-second delay that made callers think the bot had frozen. That tiny pause wrecked more conversations than any intent-classification issue.

Measure latency. Measure repair success. Measure whether people can correct themselves without blowing up the exchange and starting over from scratch.

3) Pilot

Now let real users hit it. Just don’t get cocky.

Keep traffic limited and put human fallback close enough that one bad interaction doesn’t turn into the story customers repeat for months. Read transcripts line by line for natural speech evaluation: overlap, pauses, abrupt topic shifts, those strange moments where a caller answers yesterday’s question instead of the current one because their brain is still catching up.

The metric I trust more than demo-room applause is repeat use. Hyperleap AI reports that 67% of customers who have a positive AI chatbot experience come back and use it again. That number matters because return behavior is harder to fake than stakeholder excitement.

4) Production hardening

Reliability isn’t separate from conversation design. It is conversation design.

Track turn latency, escalation triggers, STT confidence drops, TTS cutoffs, and state corruption after interruptions. Write runbooks before launch, not after your first rough weekend when support is already scrambling at 8:12 a.m. Stress-test handoffs until they stop being dramatic and start feeling routine.
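Of those signals, state corruption after interruptions is the one teams most often have no check for. One hedged way to catch it, sketched in Python: snapshot the fields that must never change during a barge-in, run the interruption handler, and diff. `PERSISTENT_KEYS`, `snapshot`, and `corrupted_keys` are hypothetical names, and the "buggy handler" is simulated with a plain assignment.

```python
import copy

# Hypothetical fields that an interruption must never alter.
PERSISTENT_KEYS = ("customer_id", "selected_account", "consent_given")


def snapshot(state: dict) -> dict:
    """Copy the durable fields before an interruption is handled."""
    return copy.deepcopy({key: state.get(key) for key in PERSISTENT_KEYS})


def corrupted_keys(before: dict, after: dict) -> list:
    """Durable fields whose values changed while handling the interruption."""
    return [key for key in PERSISTENT_KEYS if before.get(key) != after.get(key)]


state = {"customer_id": "C-1042", "selected_account": "savings",
         "consent_given": True, "current_question": "which date?"}
before = snapshot(state)

# Simulated buggy barge-in handler that resets more than it should.
state["current_question"] = None
state["selected_account"] = None

print(corrupted_keys(before, state))  # ['selected_account']
```

Run as an assertion in tests and as a counter in production, a check like this turns "the bot got confused after the interruption" into a named field and a timestamp.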

If this is heading into support operations, AI Voice Bot Call Center Deployment Strategy is a solid sanity check.

The weird part is that the best production roadmap often looks timid on paper. That bothers people because it doesn’t read like vision; it reads like restraint. Good. Restraint is what gives you a bot that survives ordinary human mess instead of just surviving a demo. And if your roadmap can’t survive an ordinary Tuesday call, what exactly did you build?

FAQ: AI Voice Bot Development for Natural Conversation

What is AI voice bot development?

AI voice bot development is the process of building systems that can listen, understand, decide, and respond in spoken conversation. In practice, that means combining speech-to-text (STT), natural language processing (NLP), dialogue management, and text-to-speech (TTS) into one real-time loop. The hard part isn't making a bot talk. It's making it respond fast enough, with enough context, that people don't feel like they're arguing with a phone tree.

Why do voice bots still sound robotic?

Most voice bots sound robotic because teams obsess over the voice itself and ignore timing, turn-taking, and context. Many bots miss subtle social cues like pauses, interruptions, clarifications, and natural phrasing, which makes even a good TTS voice feel fake. According to Digital Watch Observatory, AI still struggles with openings, closings, timing, and other human conversational signals.

How do real-time voice bot systems work?

A real-time voice bot architecture usually follows a streaming pipeline: audio input, automatic speech recognition, intent detection, dialogue management, response generation, and speech output. The best systems don't wait for the user to finish an entire monologue. They use streaming audio processing, turn-taking detection, and latency optimization so the bot can react in near real time instead of feeling one beat too late.
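
To show what "not waiting for the full monologue" looks like, here is a toy streaming loop in Python. Everything in it is a stand-in: `fake_streaming_stt` simulates a recognizer that emits growing partial transcripts, `detect_intent` is a keyword matcher rather than a real NLU model, and the intent names and replies are invented for illustration.

```python
from typing import Iterable, Iterator, Optional, Tuple


def fake_streaming_stt(chunks: Iterable[str]) -> Iterator[str]:
    """Stand-in for a streaming recognizer: yields a growing partial transcript."""
    words = []
    for chunk in chunks:
        words.append(chunk)
        yield " ".join(words)


def detect_intent(partial: str) -> Optional[str]:
    """Toy keyword matcher; a real system would call an NLU model here."""
    text = partial.lower()
    if "cancel" in text and "order" in text:
        return "cancel_order"
    if "balance" in text:
        return "check_balance"
    return None


def respond(intent: str) -> str:
    """Map an intent to a canned reply (hypothetical wording)."""
    replies = {
        "cancel_order": "I can help you cancel that order.",
        "check_balance": "Let me pull up your balance.",
    }
    return replies[intent]


def run_turn(chunks: Iterable[str]) -> Tuple[str, int]:
    """Return the reply and how many audio chunks were needed to choose it."""
    consumed = 0
    for consumed, partial in enumerate(fake_streaming_stt(chunks), start=1):
        intent = detect_intent(partial)
        if intent is not None:
            # Intent is known before the caller has finished speaking.
            return respond(intent), consumed
    return "Sorry, could you say that again?", consumed
```

With the input `["I", "want", "to", "cancel", "my", "order", "please"]`, the reply is chosen after six chunks, before "please" arrives. In a real system you would treat that early intent as a head start on preparing the response, not as permission to start talking, since the caller can still negate or change it.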

How do you handle interruptions and barge-in in a voice bot?

You need explicit barge-in handling, not wishful thinking. A bot that supports barge-in should detect user speech while TTS is playing, stop or duck audio output, preserve session state, and decide whether the interruption is a correction, a clarification, or a topic shift. If you don't design for interruptions, your bot will talk over people, and users won't forgive that twice.
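
Those four steps can be sketched in a few lines of Python. Treat this as an illustration under loud assumptions: the `Session` shape, the `handle_barge_in` function, and especially the keyword rules in `classify_interruption` are hypothetical, and a production system would use a classifier and real audio events instead of string prefixes.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Session:
    """Minimal session state (hypothetical shape)."""
    slots: Dict[str, str] = field(default_factory=dict)
    bot_speaking: bool = False
    pending_prompt: str = ""   # what the bot was saying when interrupted


def classify_interruption(utterance: str) -> str:
    """Toy prefix heuristic; real systems would use a trained classifier."""
    text = utterance.lower().strip()
    if text.startswith(("no", "actually", "i meant")):
        return "correction"
    if text.startswith(("what", "wait", "sorry")) or text.endswith("?"):
        return "clarification"
    return "topic_shift"


def handle_barge_in(session: Session, utterance: str) -> str:
    """Stop playback, keep state intact, and label the interruption."""
    if not session.bot_speaking:
        return "not_barge_in"
    # Stop (or duck) TTS output. Slots and pending_prompt are left untouched
    # so the dialogue manager can resume or revise the interrupted turn.
    session.bot_speaking = False
    return classify_interruption(utterance)
```

The design choice that matters is what the function does not do: it never clears `slots` or `pending_prompt`. Stopping audio and resetting the conversation are separate decisions, and only the first one should be automatic.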

What is conversation state management in a voice bot?

Conversation state management is how your bot keeps track of what just happened, what the user already said, and what still needs to be resolved. That includes session state tracking, slot values, prior intents, confirmation status, and context window management across multi-turn exchanges. Without it, follow-up questions like “yes,” “the second one,” or “change it to tomorrow” fall apart fast.
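
Here is a small sketch of how state lets those three follow-ups resolve. The `ConversationState` fields and the `apply_follow_up` rules are assumptions made up for this example, and the string matching is deliberately naive.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

ORDINALS = {"first": 0, "second": 1, "third": 2}


@dataclass
class ConversationState:
    """What the bot remembers between turns (hypothetical fields)."""
    intent_history: List[str] = field(default_factory=list)
    slots: Dict[str, str] = field(default_factory=dict)
    offered_options: List[str] = field(default_factory=list)
    last_slot: Optional[str] = None               # slot most recently discussed
    awaiting_confirmation: Optional[str] = None   # slot waiting on a yes/no
    confirmed: Dict[str, bool] = field(default_factory=dict)


def apply_follow_up(state: ConversationState, utterance: str) -> bool:
    """Resolve a short follow-up against state; return True if it was understood."""
    text = utterance.lower().strip(" .!?")
    # "yes" only means something if a confirmation is pending.
    if text in ("yes", "yeah", "correct") and state.awaiting_confirmation:
        state.confirmed[state.awaiting_confirmation] = True
        state.awaiting_confirmation = None
        return True
    # "the second one" only resolves against options the bot actually offered.
    for word, index in ORDINALS.items():
        if word in text.split() and index < len(state.offered_options):
            state.slots["selection"] = state.offered_options[index]
            return True
    # "change it to X" rewrites whichever slot was discussed last.
    prefix = "change it to "
    if text.startswith(prefix) and state.last_slot:
        state.slots[state.last_slot] = text[len(prefix):]
        return True
    return False
```

Notice that every branch depends on something the state already holds. With an empty `ConversationState`, all three utterances return `False`, which is exactly the "falls apart fast" failure described above.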

Can AI voice bots understand clarifications and follow-up questions?

Yes, but only if you design for elliptical speech instead of pretending users speak in neat, complete sentences. People say things like “no, the other order” or “I meant next Friday,” and your system has to resolve that against recent context, entity extraction, and dialogue history. According to Enterprise Bot, natural conversation depends heavily on context preservation and conversation flow management.

How do you design a low-latency AI voice bot architecture for natural conversations?

Start by treating latency as a product feature, not an infrastructure footnote. Use streaming STT, fast intent routing, partial response generation, and low-latency TTS so the system can begin responding before every component finishes its full pass. If your pipeline adds too many handoffs, your conversational AI voice agents will feel hesitant even when the underlying models are accurate.
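
A quick back-of-the-envelope model shows why streaming matters. The stage names and millisecond figures below are invented for illustration, not benchmarks, and the model assumes a batch pipeline waits for each stage's full pass while a streaming pipeline hands off at each stage's first usable output.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Stage:
    name: str
    first_output_ms: int   # time until the stage emits its first usable chunk
    full_pass_ms: int      # time until the stage has completely finished


def time_to_first_audio(stages: List[Stage], streaming: bool) -> int:
    """Estimate the delay before the caller hears anything."""
    if streaming:
        # Each stage hands off as soon as it has something usable.
        return sum(s.first_output_ms for s in stages)
    # Batch: each stage waits for the previous one to finish entirely.
    return sum(s.full_pass_ms for s in stages)


# Illustrative numbers only.
PIPELINE = [
    Stage("stt_finalization", 100, 300),
    Stage("intent_routing", 50, 50),
    Stage("response_generation", 200, 900),
    Stage("tts", 150, 600),
]

batch_ms = time_to_first_audio(PIPELINE, streaming=False)
streaming_ms = time_to_first_audio(PIPELINE, streaming=True)
```

With these made-up figures, the batch path takes 1850 ms to first audio and the streaming path takes 500 ms. The models are identical in both cases; only the handoffs changed.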

What are the best practices for barge-in and turn-taking in a voice agent?

Build around real speech patterns, not the tidy turns in your flowchart. That means supporting overlapping speech, short acknowledgments, self-corrections, silence thresholds, and interruption recovery without losing the thread of the task. As Cathy Pearl put it, “Design for how people actually talk, not how you want them to talk.”
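
Silence thresholds are the easiest of these to get wrong, so here is a minimal endpointing sketch. It assumes you already have per-frame speech/no-speech flags from some voice activity detector; the function name, the 20 ms frame size, and the threshold values are illustrative choices, not recommendations.

```python
from typing import Optional, Sequence


def end_of_turn_index(speech_flags: Sequence[bool],
                      frame_ms: int = 20,
                      silence_threshold_ms: int = 700) -> Optional[int]:
    """Return the frame index where the turn is judged over, or None.

    The turn ends once trailing silence after some speech reaches the threshold.
    """
    frames_needed = -(-silence_threshold_ms // frame_ms)  # ceiling division
    heard_speech = False
    silent_run = 0
    for i, is_speech in enumerate(speech_flags):
        if is_speech:
            heard_speech = True
            silent_run = 0          # any speech resets the silence counter
        elif heard_speech:
            silent_run += 1
            if silent_run >= frames_needed:
                return i
    return None


# A caller speaks, pauses 800 ms to think, then keeps talking.
frames = [True] * 10 + [False] * 40 + [True] * 10
```

With a 700 ms threshold, `end_of_turn_index(frames)` fires at frame 44, in the middle of the caller's thinking pause, and the bot jumps in. Raise the threshold to 1000 ms and it returns `None` for the same audio, so the caller gets to finish. The cost is that every genuine end of turn now takes longer to detect, which is why a fixed threshold is a starting point and not a full turn-taking strategy.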

How do you evaluate whether a voice bot sounds natural?

Natural speech evaluation should cover more than word accuracy. You should measure latency, interruption recovery, task completion, turn-taking quality, fallback rate, clarification success, and voice interaction quality metrics like whether users repeat themselves or abandon the session. A bot can have strong speech recognition and still feel awful if its pacing and dialogue management are off.
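
As a rough sketch of what that measurement could look like, the snippet below rolls session logs into a handful of those metrics. The `SessionLog` fields and the `summarize` function are hypothetical; your logging schema will differ, and turn-taking quality in particular usually needs human review on top of counts like these.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class SessionLog:
    """Per-session counters pulled from call logs (hypothetical schema)."""
    turn_latencies_ms: List[float]
    fallbacks: int        # turns where the bot said "I didn't get that"
    user_repeats: int     # turns where the user restated the same request
    task_completed: bool
    abandoned: bool


def summarize(sessions: List[SessionLog]) -> Dict[str, float]:
    """Aggregate session logs into conversation-quality metrics."""
    latencies = [ms for s in sessions for ms in s.turn_latencies_ms]
    if not sessions or not latencies:
        raise ValueError("need at least one session with at least one turn")
    total_turns = len(latencies)
    return {
        "task_completion_rate": sum(s.task_completed for s in sessions) / len(sessions),
        "abandonment_rate": sum(s.abandoned for s in sessions) / len(sessions),
        "fallback_rate": sum(s.fallbacks for s in sessions) / total_turns,
        "repeat_rate": sum(s.user_repeats for s in sessions) / total_turns,
        "median_latency_ms": median(latencies),
    }
```

None of these numbers involves word error rate, which is the point: a session can transcribe perfectly and still show a high repeat rate because the pacing was off.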

What does a production-ready voice bot roadmap include?

A real roadmap moves from narrow prototype to controlled pilot to monitored production, with clear checkpoints for data quality, failure handling, escalation, analytics, and model tuning. You also need testing for edge cases like noisy audio, ambiguous intents, barge-in, and mid-task clarifications across channels. According to Master of Code, 82% of companies have integrated voice assistant technology into operations, so the bar isn't “can we demo it?” anymore. It's “can we run it without embarrassing ourselves?”

How do you monitor and improve an AI voice bot after launch?

Watch real conversations, not just dashboard averages. Track escalation rate, repeated intents, low-confidence utterances, failed entity extraction, silence drop-offs, and places where users interrupt the bot because it's too slow or off-topic. Then feed those patterns back into prompts, dialogue rules, training data, and conversation state management so the system gets less brittle over time.