AI Transformation Services: Stage It Right

Most AI programs fail for a boring reason: they try to scale before they can learn. That's the part vendors don't like saying out loud. AI transformation...

Most AI programs fail for a boring reason: they try to scale before they can learn. That's the part vendors don't like saying out loud. AI transformation services only work when they're staged, measured, and tied to how your business actually makes decisions, not when they're sold as a big-bang platform rollout.

The evidence is hard to ignore. According to a 2026 Harvard Business School Online summary of McKinsey data, 88% of organizations use AI in at least one function, but only 31% are scaling and just 7% are seeing value from widespread deployment. We'll get into why that gap exists, what a real AI transformation strategy looks like, and how to build it without lighting money on fire.

What AI Transformation Services Really Mean

Up to 90% fewer fraud cases.

That number came out of a 2026 Haposoft report on AI-based fraud detection, and honestly, I get why executives latch onto it. Read a stat like that in a board deck and suddenly everybody wants “AI transformation” by next quarter.

Here's the problem. Most companies aren't buying transformation. They're buying a model, a chatbot, or a pilot with a polished dashboard and a six-week rollout plan. I've watched teams celebrate the demo, approve the invoice, and then act surprised when the old process is still running in parallel 90 days later because nobody trusts the new system after two bad outputs.

Databricks says AI transformation means embedding AI into operations, products, and services. That's fine as far as definitions go. I think the real fight starts after the model appears, because that's where budgets get burned: decision rights, handoffs, approvals, exceptions, accountability. Not the flashy part. The expensive part.

A single use case doesn't fix this. Automating one task doesn't fix it either. A company can plug in a model, declare victory, and still end up with managers overriding outputs, staff keeping spreadsheets on the side “just in case,” and a tool that's technically live but practically ignored.

AI transformation services only change operations in a measurable way when four pieces move together: AI transformation strategy, workflow redesign, model governance, and user adoption. Miss one and the whole setup gets shaky fast.

Banking makes that painfully obvious. A fraud model can look excellent on paper and still fail in practice if alert routing is sloppy, analysts don't have clear review rules for edge cases, feedback never gets fed back into learning loops, or MLOps retraining isn't happening as fraud patterns shift. Picture a mid-size bank reviewing 40,000 flagged transactions a day. If the handoff logic is bad, all you've done is create cleaner chaos at higher speed.

ScienceDirect lands closer to reality here: staged AI implementation tends to work better because adoption happens in manageable steps across automation, augmentation, and data richness. That order matters more than people want to admit. Start with data readiness. Baseline performance before you touch anything. Redesign workflows around human judgment instead of forcing employees to contort themselves around a tool somebody bought at an offsite.

Then keep going. Adaptive systems beat frozen launch-day models every time because the world changes whether your vendor roadmap does or not.

This is why enterprise AI change management belongs inside the definition of transformation, not bolted on afterward like cleanup duty. If people don't trust outputs, if workflows can't absorb them, or if governance can't explain them, you haven't deployed intelligence. You've installed risk.

What should you do about it? Ask uglier questions before signing anything. Who changes their daily work? What gets retired? How are edge cases reviewed? Where does feedback go? Who owns retraining? If nobody can answer those clearly, you're not looking at transformation yet. You're looking at software with good branding.

Why Big-Bang AI Transformation Fails

40%. That's how many organizations were using AI across the business in 2026, according to Thomson Reuters, up from 22% in 2025. That jump is huge. Honestly, when I first saw it, my reaction wasn't "wow, progress." It was: that's a lot of companies trying to scale before they've earned the right to.

Because here's what usually happens. The model doesn't die first.

The chatbot still replies. The dashboard still loads during the board meeting. The Thursday pilot demo still gets applause, usually right after somebody says they're "moving fast" over a slide with four colored arrows and somehow no actual owner listed anywhere.

I've watched this play out in a way that's almost boring now. Week one feels sharp. Week six doesn't. Customer service wants call summaries. Finance wants risk flags. Sales ops wants forecasting help. One shared system, three different demands, three different definitions of success, and suddenly you've got something that's being treated like separate products without being built like separate products.

The word for that is dilution.

Not sabotage. Not some dramatic model collapse. Dilution. Attention gets spread across too many workflows, too many teams, too many systems, and the learning rate falls apart while failure points multiply. I think this is where most big-bang programs actually die: slow-motion confusion, where everybody touched the thing and nobody owns it.

The ugly part isn't even flashy enough to make the postmortem deck. Legal asks about model governance after procurement has already signed the contract. Operations finally checks the data and finds missing fields, bad labels, and no production-grade baselines. I saw a team once discover halfway through rollout that nearly 18% of their historical support tickets had category labels applied differently by two regions, which meant their "smart routing" pilot was learning from contradictions. That's how a program sold as transformation turns into exception handling with better branding.

And no, going smaller doesn't magically save you if the foundation is rotten.

A narrow rollout still fails if your data's shaky and your change plan is basically "the teams will adapt." They won't. Or they'll adapt in all the wrong ways — side spreadsheets, manual workarounds, quiet distrust, managers telling people to double-check every output but never changing the workflow itself.

IBM Think has been pretty direct about the real bottleneck: AI can improve productivity and open new business models, but scaling it takes serious data and compute capacity. Executives keep trying to skip that part because groundwork is dull and kickoff meetings are fun. Scale isn't a decision you announce in Q2. It's something you earn after doing the annoying work nobody wants to clap for.

The appetite is real, sure. Haposoft said in its 2026 report that 74% of companies already see AI as a growth driver. I don't doubt that for a second. But believing AI matters isn't an AI transformation strategy. It's a mood. A budget line if you're lucky.

- Over-scoping: one launch wave tries to remake customer service, finance, and sales ops before anyone has built even basic AI learning loops.

- Weak data readiness: no lineage, poor labeling, fragmented systems, and no dependable baseline for performance before launch.

- Thin change management: no role redesign, no workflow training, no clear escalation path when bad outputs show up at 2:17 p.m. on a Tuesday and someone needs an answer right then.

- MLOps treated like cleanup: models go live without continuous learning plans or clear operational ownership.

The version that works is less exciting on slides and better everywhere else. Start with one workflow. Gather evidence. Prove it can survive contact with real users before asking five departments to reorganize around it. That's why AI transformation consulting for organizational change matters more than another shiny demo stack; staged AI implementation gives adaptive AI systems time to learn before the organization has to absorb the blast radius.

Going slower at the start is often how serious companies get to production faster. So if your plan touches everything by Q3 — what exactly do you think breaks second?

The Staged AI Transformation Framework

Everybody says the same thing about AI transformation now: move fast, scale early, get a few wins on the board, show momentum to the exec team, and figure out the operating model later. Sounds modern. Sounds aggressive. Usually it's how you end up with six months of demos, a shiny chatbot nobody trusts, and a cleanup bill that lands right after the applause.

That advice is incomplete at best. I'd argue it's flat-out backwards. The problem isn't that companies can't launch AI anymore. They can. A 2026 Harvard Business School Online summary of McKinsey research said 88% of organizations already use AI in at least one business function. So let's stop pretending go-live is the hard part. I've seen teams stand something up by Friday and spend the next 90 days explaining why nobody uses it on Tuesday.

The missing piece is sequence.

Not excitement. Not tool count. Not how many pilots you can cram into legal, support, and operations before Q3 ends.

Sequence.

That's where most programs break. They scale before they've earned it. They confuse activity with evidence, then label the rework "transformation" like a nicer name fixes the damage. Smart teams don't usually stall because they're too cautious. They stall because they skipped the order of operations and production exposed them.

EY gets closer than most firms do on this. Real AI transformation isn't one automated task and a victory lap. It's moving toward AI-driven services and outcomes, connecting decisions across the customer journey, and keeping humans in the loop where they actually need to be. That doesn't happen in one dramatic leap. It happens in stages, because each stage teaches something different, and if you miss the lesson early, the later failure gets more expensive.

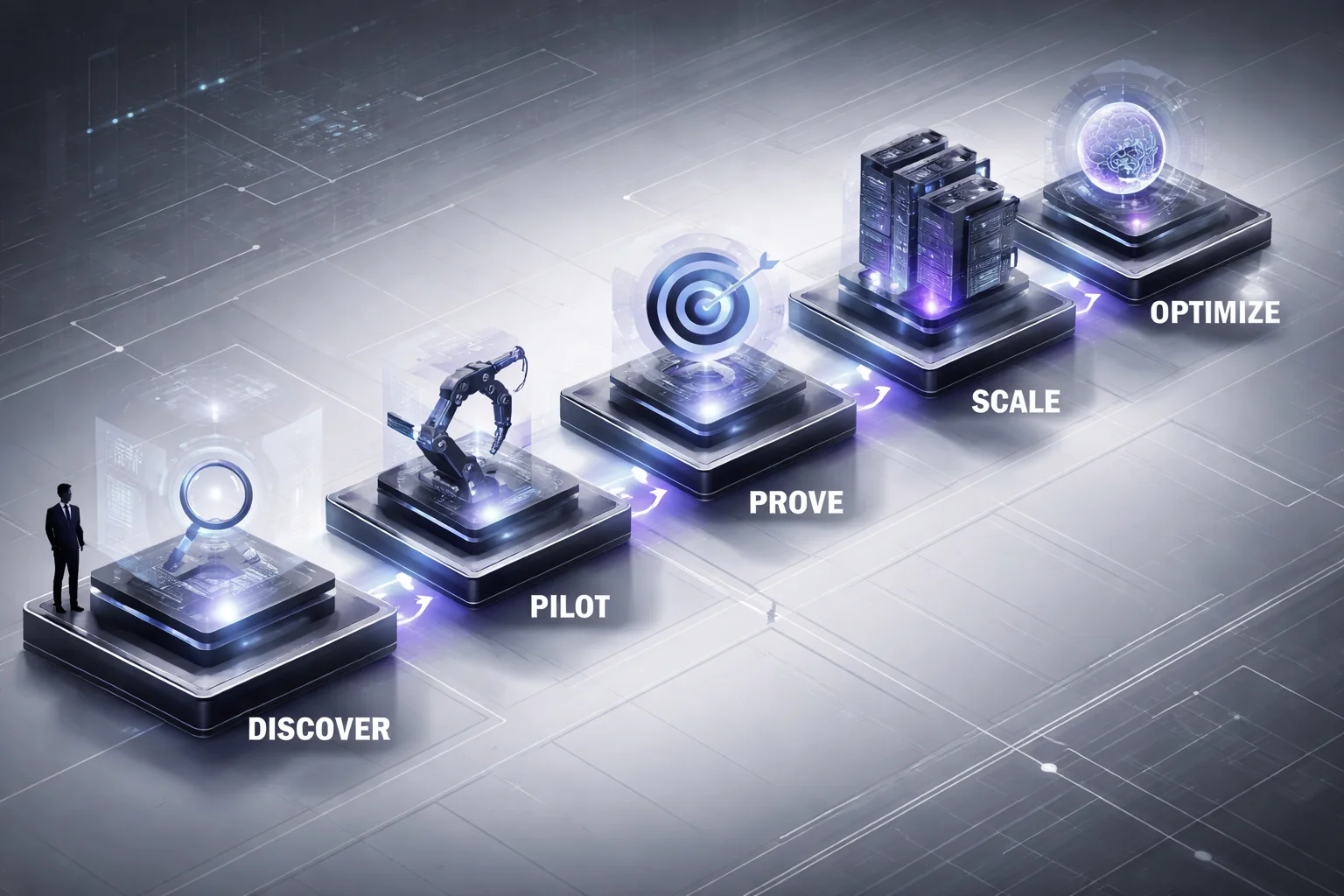

My version for Sustainable Ai Digital Transformation Services is plain on paper: five phases, one narrow objective each, one success metric, one decision gate. Plain isn't easy. Ask the company that rolls out three separate assistants across procurement, legal review, and customer support, then ends up with three dashboards showing three different "accuracy" numbers and no shared definition of success.

1. Discover

This isn't launch mode. It's selection mode.

You pick one workflow where better decisions or shorter cycle time would show up in money, errors, or hours saved. One workflow. Not a theme. Not "knowledge work." Something concrete enough that people can point to today's performance without arguing for 40 minutes in a conference room.

The metric here is a documented baseline: cost, error rate, cycle time, revenue impact—whatever actually matters in that workflow. The gate is data readiness. Not perfect data. Just data good enough to test without somebody rebuilding half the inputs in Excel at 11:38 p.m. If your team can't agree on current performance, stop there. You're not ready for AI; you're still trying to discover reality.

2. Pilot

A lot of teams treat pilot as proof of ambition. Wrong target.

The point is fit.

Take something contained, like a contract review assistant inside one legal sub-process with human approval on every output. That's useful because it surfaces real friction without pretending you've solved enterprise law overnight. A solid success metric might be 20% faster review time with no increase in escalation risk. That's specific enough to matter.

The gate is trust: do people come back and use it again next week without an executive hovering nearby? That's the test I care about most early on. If usage collapses the second leadership stops watching, your pilot did teach you something—it just wasn't what was promised in the kickoff deck.

3. Prove

This is where nice demos go to die.

You find out whether value survives controls: governance, MLOps, auditability, exception handling, ownership inside an actual AI operating model instead of a vague box on an org chart.

The metric is repeatable value under real controls, not under demo conditions with hand-held data and forgiving users. The gate is blunt: can you explain why it works, where it fails, and who takes over when it fails? If those answers get slippery, don't scale it. I've watched teams try anyway. It never gets prettier later.

4. Scale

People love this word too much.

Scale should mean controlled replication into adjacent teams or workflows after you've shown the thing transfers cleanly. Nothing heroic here. No all-hands miracle push.

The metric is simple: deployment two and deployment three should hit target value faster than deployment one did. If they don't, you haven't built a scaling model; you've rebuilt a custom project three times.

The gate is whether your training approach, support model, and enterprise AI change management process travel across functions without collapsing into dependency on one superstar manager answering Slack at 10:47 p.m. If that's still required, you're not scaling anything except stress.

5. Optimize

This phase gets ignored because it's less glamorous than launch photos and internal announcements.

It's also where systems either learn or quietly rot.

You need drift checks, retraining discipline, user feedback inside day-to-day work, and real learning loops that improve outcomes before decay shows up in some quarterly review deck three months late. The metric is ongoing gains over time—not static performance from month one screenshotted forever.

The gate is operational reality: have you built continuous learning into daily work or are you still pretending governance lives in PowerPoint? I think too many teams choose PowerPoint because it's easier to circulate than accountability.

One more number people should sit with: Thomson Reuters said its 2026 report drew from more than 1,500 respondents across 27 countries. That's not one Silicon Valley habit or one overcaffeinated conference trend talking to itself. Adoption is spreading fast almost everywhere.

Mature transformation still doesn't come from enthusiasm alone. It comes from sequence.

If you do all five phases well, what you've really built isn't just an AI system anyway. It's organizational patience with teeth—something rare enough to matter now more than another rushed rollout ever will. How many companies are actually willing to do that before chasing scale again?

Designing Learning Loops into AI Programs

Ten weeks in, the room always gets quieter.

I’ve watched this happen after a flashy AI launch: week one is packed, leaders are posting screenshots in Slack, somebody says adoption is “ahead of plan,” and by week ten the support team has a private workaround, ops is fixing outputs by hand, and nobody wants to be the first person to say the thing out loud — the system shipped, sure, but it didn’t actually learn.

7%. That’s the number I keep coming back to. A 2026 Harvard Business School Online summary of McKinsey research says 31% of organizations are scaling AI efforts, while only 7% are getting value from widespread deployment.

I think that gap is the whole problem. Companies aren’t failing to launch AI. They’re launching plenty of it. They’re failing to improve it after launch. Expansion gets mistaken for progress all the time, and I’d argue that’s how expensive demos turn into stale systems.

You can buy a solid model. You can stand up clean infrastructure. You can get a burst of internal adoption because leadership wants visible wins by next quarter and employees are curious enough to click around. None of that proves you have an AI transformation strategy. It proves something worked once, under friendly conditions, with extra attention and more patience than real operations ever get.

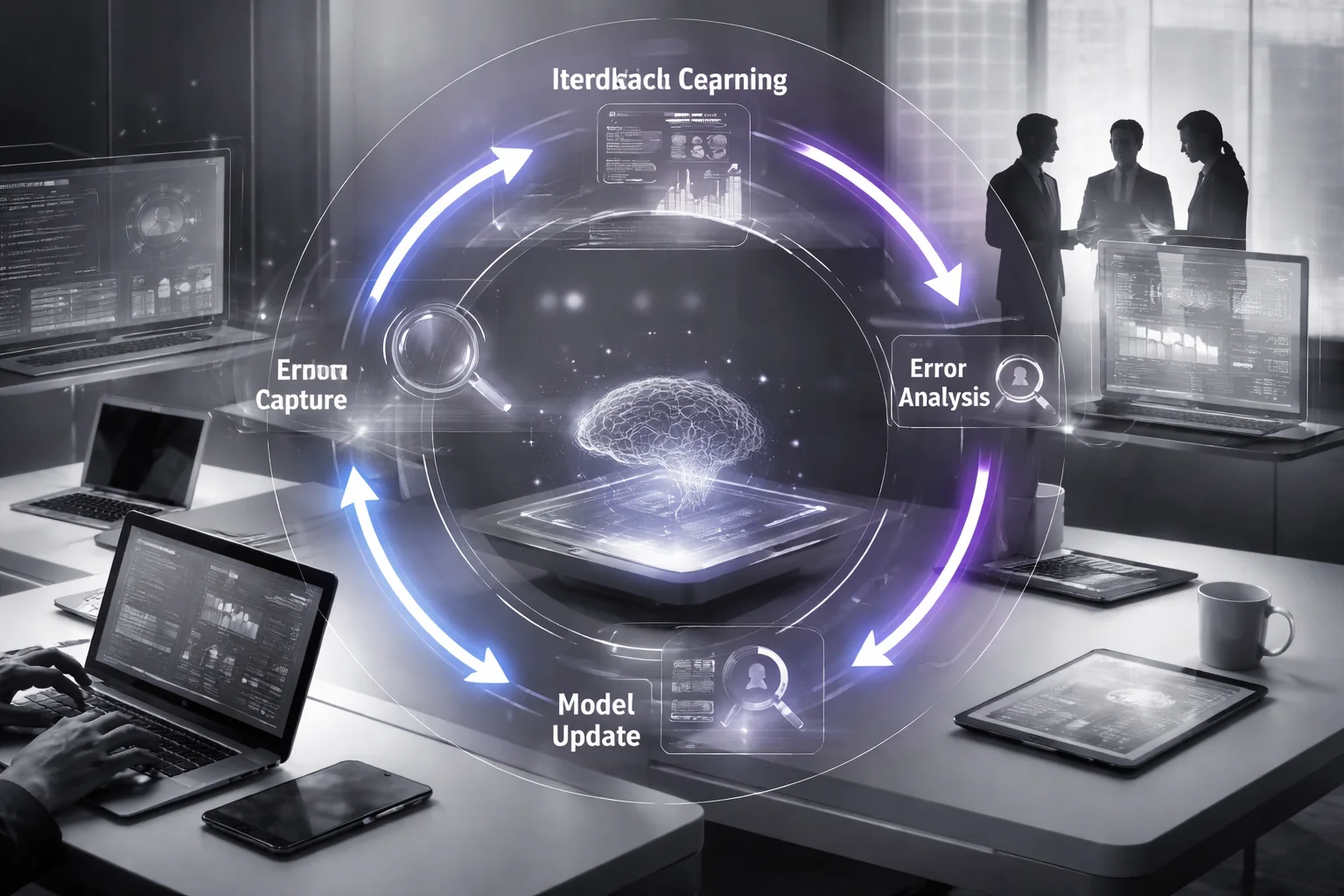

The thing that matters sits right in the middle: AI learning loops. Every implementation stage should produce evidence, not just output. If feedback lives in email threads, side chats, random spreadsheets, or a form people ignore after day three, you won’t get adaptive AI systems. You’ll get status meetings and blame.

The loop itself isn’t mysterious. It needs four parts.

- Feedback capture: collect user actions inside the workflow itself, not in a separate survey nobody opens. If someone reviews an AI draft in Salesforce, ServiceNow, or an internal tool, they should be able to mark draft quality, escalation reason, and confidence level right there.

- Error analysis: sort failures by type, source, and business impact. Was retrieval bad? Was the data stale? Did the prompt structure break down? Was policy unclear? Did the user misuse the tool?

- Human review: route edge cases to people who can fix both the answer and the process behind it. Human-in-the-loop isn’t there for show. It creates labeled learning signals you can actually use.

- Model or workflow updates: change the system based on what you learned. Retraining might help. A lot of the time, though, the better move is a workflow redesign, clearer escalation rules, or tighter data readiness standards.

This is where an AI operating model either turns real or gets exposed as a fancy org chart. Someone has to own review thresholds. Someone has to own model governance. Someone has to decide whether MLOps pushes an update or operations changes a business rule instead.

Customer service makes this obvious fast. A 2026 Haposoft report says FPT uses AI to handle around 70% of customer service inquiries. Big number. Sounds like a clean automation win until you think about what it takes to hold that line without wrecking trust. You need constant learning from unresolved tickets, handoff reasons, repeat contacts, and agent corrections. Skip that for even 30 days and drift starts creeping into the places leadership usually notices last: frustrated customers, repeat volume climbing week over week, ugly escalations no dashboard framed as “efficiency” can hide.

The pressure isn’t easing up either. A 2026 Thomson Reuters report found organization-wide AI usage nearly doubled in a year, and professionals increasingly expect AI to affect jobs, billing, revenue, and role design.

That changes what companies actually need. Yes, maybe you need AI transformation services. What you really need is a program that learns faster than your business changes.

The model isn’t the product anymore. The learning loop is. Build that part well or you’re just rolling out software and hoping people stay impressed.

Adaptation Mechanisms That Keep Transformation on Track

40%. That's the share of enterprise apps a 2026 Haposoft report says will include task-specific agents, up from under 5% in 2025. I think that number should make people a little uneasy, not excited, because every new agent is another place silent failure can sit quietly until somebody important notices too late.

That's the part the neat rollout slides miss. Everybody loves the clean sequence: roadmap, use case, careful launch, progress review, scale. Looks great in a deck. Falls apart fast once real work shows up messy, uneven, and full of exceptions nobody mentioned in kickoff.

I saw that by week six on a document review assistant. Pilot metrics looked fine at first. Then one regional team started feeding it contracts with different language patterns, exception volume jumped, and legal got nervous almost immediately. Not because the model had suddenly become useless. Because nobody had decided in advance what to do when confidence dropped below the comfort line. So every odd contract turned into a debate, and debates are a terrible operating model.

The core issue isn't model quality by itself. It's rigidity. A good AI program can't stay fixed once it hits production. Scope has to move. Control levels have to change. Rollout speed has to slow down or speed up based on evidence instead of optimism. I'd argue most transformation plans still get this wrong because they treat adaptation like cleanup work after launch, not part of the design from day one.

- Rigid program: fixed scope, preset automation targets, monthly reviews, governance bolted on after launch.

- Adaptive program: variable scope, staged automation depth, weekly exception reviews early on, model governance built into operating decisions from day one.

People call the second version slower. I don't buy that. It's usually faster because it stops wasting time pretending edge cases are rare. I've watched teams burn three weeks arguing over 17 misrouted contracts that should've triggered human review on day one. That's not speed. That's theater.

Boston Consulting Group has been unusually direct here: AI transformation is workforce transformation. Good. It should be said that plainly. This isn't soft culture talk detached from operations; it's operations itself. People learn new systems inside live daily work, under pressure, with deadlines still moving and customers still waiting. Not in a training room with polished slides and fake examples.

That's why stakeholder review can't live off to the side as some monthly governance ceremony. Team leads, risk owners, ops managers, and end users need to be looking at failure patterns close to the workflow itself and making calls together: widen scope, tighten controls, or pause expansion. If that sounds less glamorous than "scale fast," that's because it is.

You also need safeguards written before launch, not after somebody panics on a Friday afternoon.

- Rollback plans: define exactly what sends work back to human-only handling, such as rising error rates for two straight weeks or unresolved policy violations.

- Exception handling: route ambiguous cases to trained reviewers with clear SLAs and labeled outcomes so the system keeps learning from real edge cases.

- Deployment cadence rules: don't expand every sprint just because engineering can; expand only when performance baselining holds across teams and data readiness is consistent.

- Model monitoring: use MLOps for drift checks, approval logs, threshold alerts, and version control tied directly to business risk.

The risk isn't hypothetical either. Thomson Reuters reported in 2026 that 50% of lawyers called AI a major threat tied to unauthorized practice concerns, up from 36% in 2025. That's not generic resistance. That's regulated professionals telling you trust disappears fast when capability grows quicker than governance.

If you want the practical version of that people-and-process problem, AI transformation consulting for organizational change gets into it well. Real AI transformation strategy isn't about pushing harder all the time. It's knowing when not to push at all. So before you expand anything, have you actually decided what evidence would make you stop?

How Buzzi AI Delivers Adaptive AI Transformation

I watched a leadership team try to roll out AI across half a company in one shot. Looked great in the boardroom. Slick deck, aggressive timeline, lots of talk about transformation. Six months later? Confused teams, stalled adoption, and the usual blame parade by Q3.

That's the mistake. People think speed saves ROI. It doesn't. They think bigger scope creates momentum. It usually creates chaos. I'd argue the big-bang launch is often just impatience dressed up as confidence.

The failure pattern isn't mysterious. The Project Management Institute has said this pretty plainly: AI-driven change works when there's an actual framework behind it, one that lines up employee commitment, motivation, and the nuts-and-bolts mechanics of transformation. Miss that, and you get exactly what you'd expect — disengaged employees, turnover, missed targets, and tense meetings where nobody wants to own the outcome.

Buzzi AI takes the opposite route with its AI transformation services. Smaller start. Staged rollout. Learn fast. Expand only when the evidence says it's working, not because an executive wants a louder launch at the next quarterly review.

Here's the framework.

First: data readiness and a clear AI operating model. One workflow. One owner. One baseline. That's where Buzzi starts, because if nobody knows who owns the process or what "better" means, you're not transforming anything — you're just adding software.

Second: staged AI implementation with model governance and MLOps built in early. Early matters. I've seen what happens when companies leave governance for later: around week 10 or 12, risk teams start firing off those lovely Thursday afternoon emails asking who approved the model, where the controls are, and why nobody can explain output drift.

Third: treat the pilot like a signal generator, not a vanity project. A pilot should produce useful signal. That signal feeds AI learning loops. Those learning loops shape workflow changes, user training, control thresholds, and eventually stronger adaptive AI systems. Buzzi doesn't shove continuous learning onto some future roadmap slide and call it strategy. It gets folded into weekly decision reviews and improvement cycles from day one.

That's how this turns into commercial results instead of theater. A 2026 Haposoft report found that 58% of businesses saw cost reductions from automation and fewer operational errors. Real gains. Less rework. Earlier detection of failure patterns. Expansion based on proof that a system can hold up under actual operating conditions.

And yeah, staged transformation isn't flashy. That's part of why companies resist it. They're still hooked on launch moments — the announcement, the internal buzz, the illusion that scale on paper means success in practice.

Buzzi doesn't sell that fantasy.

It builds a system that keeps earning trust after launch.

Less drama. Better odds.

That's how ROI survives.

Where this leaves us

AI transformation services work when you stage them like an operating change, not a software rollout, building proof, controls, and learning loops before you push for scale.

Your next move is simpler than most teams make it. Start with one high-friction workflow, set performance baselining before launch, and make model governance, drift monitoring, and human-in-the-loop review part of the design from day one. Then watch the boring stuff, because that's where programs either hold or crack: data readiness, cross-functional ownership, feedback loops, and whether your pilot-to-production roadmap actually earns the next stage.

Most people get this wrong by treating AI transformation services as a faster way to deploy tools across the business. The better way to think about it is as a staged system for helping your company learn, decide, and improve without losing control.

FAQ: AI Transformation Services

What are AI transformation services?

AI transformation services help you move from isolated AI experiments to AI embedded across operations, products, and decision-making. That usually includes strategy, data readiness, pilot design, model governance, MLOps, workflow redesign, and enterprise AI change management, because the tech is only half the job.

Why do big-bang AI transformations fail?

They fail because most companies try to scale before they’ve proved value, fixed data issues, or built a workable AI operating model. According to a 2026 Harvard Business School Online summary of McKinsey research, 88% of organizations use AI in at least one business function, but only 31% are scaling and just 7% are seeing value from widespread deployment, which tells you the jump from pilot to production is where things break.

How does a staged AI implementation framework work?

A staged AI implementation starts with one bounded use case, sets performance baselining, then expands only after the team proves business impact and operational stability. In practice, you move through readiness, pilot, controlled production, and scalable AI rollout, with clear gates for data quality, risk management, human-in-the-loop review, and ownership at each stage.

How do AI learning loops improve results over time?

AI learning loops turn production usage into better models instead of stale models. You collect user feedback, monitor output quality, track drift monitoring signals, and feed that back into retraining or rule updates, so the system keeps learning from real work rather than freezing at launch.

Which adaptation mechanisms keep adaptive AI systems on track?

The big ones are drift monitoring, threshold alerts, fallback rules, human review queues, retraining triggers, and versioned deployment through MLOps. Adaptive AI systems need those controls because performance degradation rarely announces itself loudly, it usually shows up as small misses, then compounds.

Can AI transformation services include MLOps and governance?

Yes, and they should. If your AI transformation services stop at strategy decks or prototypes, you’re buying theater, not capability, because model governance, audit trails, deployment pipelines, access controls, and monitoring are what make AI usable in an enterprise setting.

Does enterprise AI transformation require change management?

Absolutely. Boston Consulting Group makes the point plainly: AI transformation is also workforce transformation, which means adoption depends on training, leadership behavior, and how new work actually gets done day to day. If teams don’t trust the system, know when to override it, or see how it fits their KPIs, the rollout stalls.

What should be included in an AI transformation roadmap?

A good roadmap covers business goals, use-case sequencing, data readiness, architecture choices, governance rules, success metrics, and a pilot-to-production roadmap with stage gates. It should also define cross-functional AI teams, budget assumptions, risk management steps, and how process automation with AI connects back to revenue, cost, or service KPIs.